Dr. Kaoutar El Maghraoui

Principal Research Scientist at IBM Research AI Platforms. Adjunct Professor at Columbia University. ACM Distinguished Speaker & Member. Pioneering AI hardware-software co-design for the next generation of intelligent systems.

Shaping the Future of AI

Two decades of pioneering research at the intersection of AI, hardware, and distributed systems.

Dr. Kaoutar El Maghraoui is a Principal Research Scientist at IBM Research AI Platforms and the Principal Investigator of the AI Hardware Center experimental testbed. She focuses on deep technical work in hardware-software co-design for IBM's next-generation AI accelerators, while leading cross-functional teams across model enablement, systems optimization, and open-source tooling.

She is also an Adjunct Professor at Columbia University, teaching High-Performance Machine Learning and Scalable Large Language Models. Her research spans efficient LLM inference on novel architectures, analog in-memory computing, neural architecture search, and AI systems optimization — from silicon to software.

Recognized as an ACM Distinguished Member (top 10% worldwide) and ACM Distinguished Speaker, she has delivered over 60 keynotes and invited talks at major international conferences. She holds 17 US patents and has published extensively in top-tier venues including Nature Communications, ICLR, NeurIPS, ASPLOS, and ICML.

Current Roles

Principal Research Scientist & Technical Lead

IBM Research AI Platforms · PI, AI Hardware Center Testbed

Adjunct Professor

Columbia University

ACM Distinguished Speaker

Association for Computing Machinery

Science Advisory Board Member

University at Albany — Emerging AI Systems

Foundry Advisor

UM6P — Mohammed VI Polytechnic University

Global Vice-Chair

Arab Women in Computing (ArabWIC)

Advancing AI at Every Layer

From silicon to software — building the foundations for the next era of artificial intelligence.

AI Hardware-Software Co-Design

Leading the development of optimized AI model mapping and deployment strategies for next-generation AI accelerators, bridging the gap between algorithm design and hardware capabilities.

Analog In-Memory Computing

Pioneering neural architecture search techniques for analog in-memory computing, enabling energy-efficient AI inference through novel hardware paradigms.

LLM Optimization & Inference

Developing dynamic KV cache management and efficient inference techniques for large language models, accelerating enterprise AI deployment at scale.

Neural Architecture Search

Creating multi-objective hardware-aware NAS frameworks that automatically discover optimal neural network architectures for specific hardware targets.

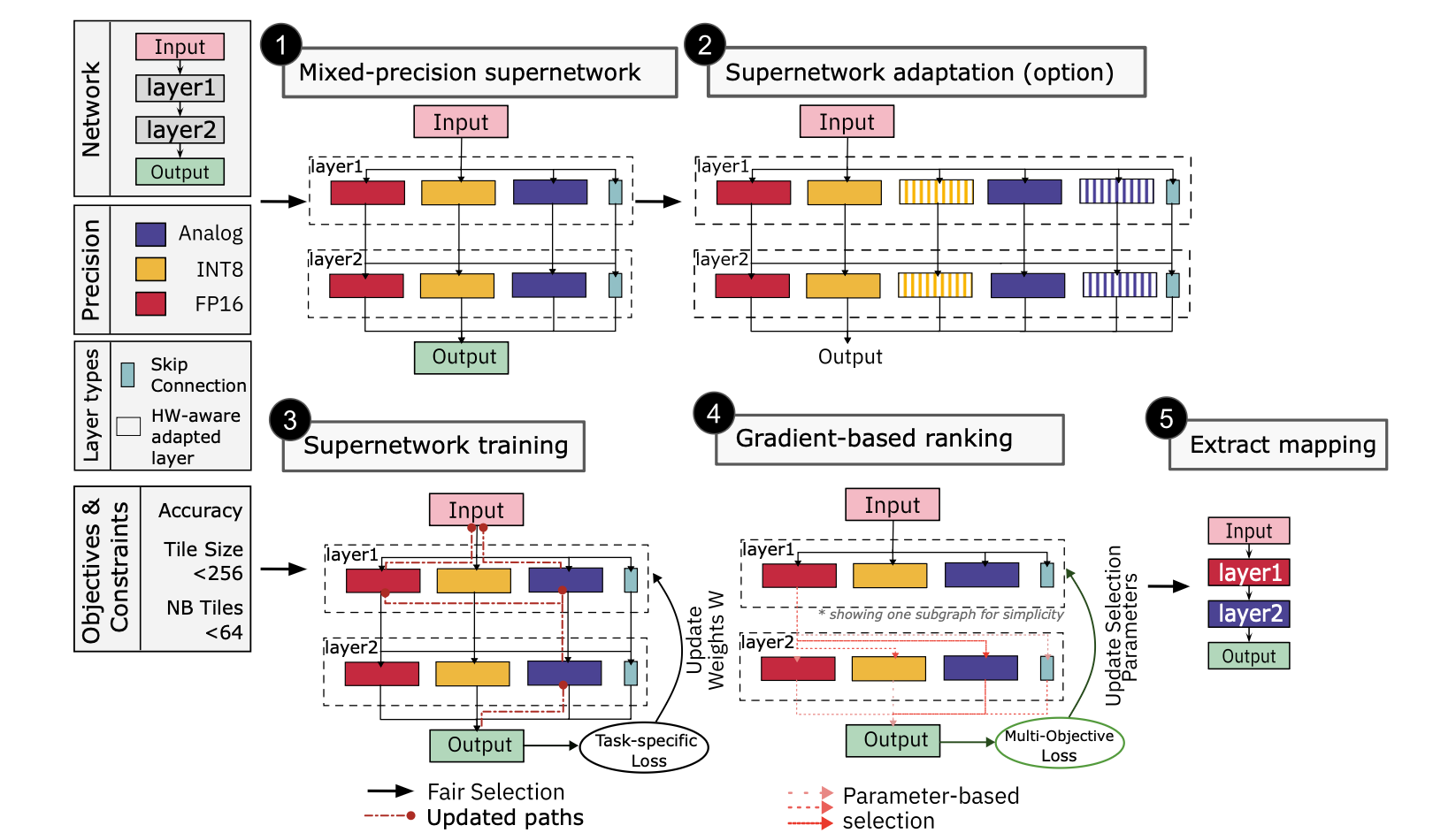

Supernetwork-based efficient mapping of deep learning models

Key Research Publications

Selected high-impact publications spanning AI hardware co-design, neural architecture search, and model optimization.

Supernetwork-Based Efficient Mapping of Deep Learning Applications to Mixed-Precision Hardware Using Model Adaptation

Introduces Mixed-Precision Supernetwork (MPS), a unified framework for training mixed-precision supernetworks that map deep learning models to heterogeneous analog-digital hardware. MPS produces mappings ~2.2x faster and achieves ~3.4% higher accuracy than fully analog approaches, while improving energy efficiency by mapping up to 80% of weights to analog hardware.

H Benmeziane, C Lammie, I Boybat, M Rasch, M Le Gallo, A Vasilopoulos, H Tsai, GW Burr, V Narayanan, K El Maghraoui, A Sebastian

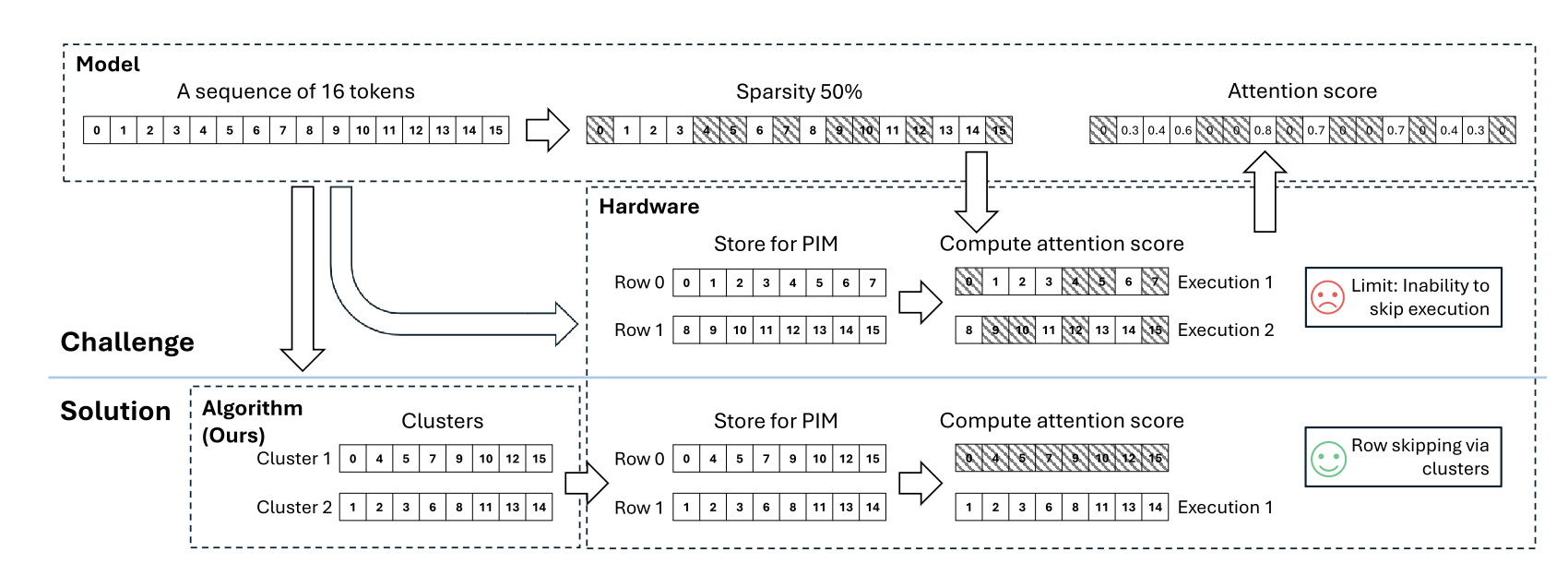

STARC: Selective Token Access with Remapping and Clustering for Efficient LLM Decoding on PIM Systems

A novel approach for efficient large language model decoding on Processing-In-Memory systems, using selective token access with remapping and clustering to dramatically reduce memory bottlenecks.

Z Fan, Y Liu, G Gagnon, Z Liu, Y Hou, H Benmeziane, K El Maghraoui, L Liu

Advancing Fluorescence Detection and Ranging in Scattering Media with Mixture-of-Experts and Evidential Critics

Introduces a Mixture-of-Experts architecture combined with evidential critics for robust fluorescence detection and ranging in challenging scattering environments, advancing scientific imaging capabilities.

I Erbas, F Demirkiran, K Swaminathan, N Wang, NI Nizam, N Yuan, S Ragab, L Chavez, K El Maghraoui, X Intes, V Pandey

Ultra-Low Precision 4-bit Training of Deep Neural Networks

Novel techniques and numerical representation formats that reduce the precision of training systems from 8 bits to 4 bits, introducing adaptive Gradient Scaling for quantized gradients.

X Sun, N Wang, CY Chen, J Ni, A Agrawal, X Cui, S Venkataramani, K El Maghraoui, et al.

A Flexible and Fast PyTorch Toolkit for Simulating Training and Inference on Analog Crossbar Arrays

A comprehensive PyTorch toolkit enabling efficient simulation of analog in-memory computing for neural network training and inference on crossbar arrays.

MJ Rasch, D Moreda, T Gokmen, M Le Gallo, F Carta, C Goldberg, K El Maghraoui, et al.

A Comprehensive Survey on Hardware-Aware Neural Architecture Search

An extensive survey and taxonomy of hardware-aware NAS methods, covering search strategies, hardware metrics, and deployment considerations across diverse platforms.

H Benmeziane, K El Maghraoui, H Ouarnoughi, S Niar, M Wistuba, et al.

ModelOps: Cloud-Based Lifecycle Management for Reliable and Trusted AI

A framework for managing the full lifecycle of AI models in the cloud, addressing reliability, trust, and operational efficiency for enterprise AI deployments.

W Hummer, V Muthusamy, T Rausch, P Dube, K El Maghraoui, A Murthi, et al.

Using the IBM Analog In-Memory Hardware Acceleration Kit

Comprehensive guide to the IBM AIHWKit for neural network training and inference on analog in-memory computing hardware, enabling energy-efficient AI acceleration.

M Le Gallo, C Lammie, J Büchel, F Carta, O Fagbohungbe, C Mackin, K El Maghraoui, et al.

Neural Architecture Search for In-Memory Computing-Based Deep Learning Accelerators

A review of NAS techniques tailored for in-memory computing accelerators, bridging the gap between neural network design and emerging hardware paradigms.

O Krestinskaya, ME Fouda, H Benmeziane, K El Maghraoui, A Sebastian, et al.

Deep Compression of Pre-trained Transformer Models

Techniques for dramatically compressing pre-trained transformer models while maintaining accuracy, enabling efficient deployment on resource-constrained hardware.

N Wang, CCC Liu, S Venkataramani, S Sen, CY Chen, K El Maghraoui, et al.

Multi-Objective Hardware-Aware Neural Architecture Search with Pareto Rank-Preserving Surrogate Models

A novel multi-objective NAS framework using Pareto rank-preserving surrogate models to efficiently discover optimal architectures across multiple hardware constraints.

H Benmeziane, H Ouarnoughi, K El Maghraoui, S Niar
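
To make the idea of a Pareto rank concrete, here is a minimal Python sketch of non-dominated sorting over two objectives, both minimized. It is an illustration only, not the method from the paper above: the function names `dominates` and `pareto_ranks` and the candidate values (error in percent, latency in milliseconds) are hypothetical, and no surrogate model is trained here. The paper's title suggests a surrogate that aims to preserve this kind of ordering rather than predict each objective exactly.

```python
from typing import List, Sequence, Tuple


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """Return True if a is no worse than b on every objective
    and strictly better on at least one (all objectives minimized)."""
    no_worse = all(x <= y for x, y in zip(a, b))
    strictly_better = any(x < y for x, y in zip(a, b))
    return no_worse and strictly_better


def pareto_ranks(points: List[Tuple[float, ...]]) -> List[int]:
    """Rank 0 is the non-dominated front; rank 1 is the front that
    remains once rank 0 is removed, and so on."""
    ranks = [-1] * len(points)
    remaining = set(range(len(points)))
    rank = 0
    while remaining:
        # A point belongs to the current front if nothing left dominates it.
        front = {
            i for i in remaining
            if not any(dominates(points[j], points[i])
                       for j in remaining if j != i)
        }
        for i in front:
            ranks[i] = rank
        remaining -= front
        rank += 1
    return ranks


# Hypothetical candidate architectures: (error %, latency ms).
candidates = [(8.0, 12.0), (6.5, 20.0), (9.0, 15.0), (6.5, 25.0), (10.0, 30.0)]
ranks = pareto_ranks(candidates)
print(ranks)
```

In this sketch the first two candidates trade accuracy against latency without either beating the other, so they share the best rank. A search procedure only needs the relative order of candidates, which is why preserving rank can matter more than predicting exact metric values.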

Shaping the Next Generation

As Adjunct Professor at Columbia University, I bridge cutting-edge IBM Research with graduate education — equipping students to design, optimize, and deploy AI systems at scale.

Teaching Philosophy

Systems Thinking

Understanding how components interact at scale, from silicon to the software stack. Students don't just learn algorithms; they implement them on real hardware and measure the results.

Empirical Rigor

Every claim must be measured and validated. Courses emphasize profiling, benchmarking, and performance analysis as first-class skills alongside theoretical foundations.

Ethical Awareness

Considering the societal implications of AI systems: the energy cost of training, the accessibility of deployment, and the responsibility of building technology that serves everyone.

Institution

Columbia University

Courses

2 Graduate

Focus

AI Systems & HPC

Approach

Research-Driven

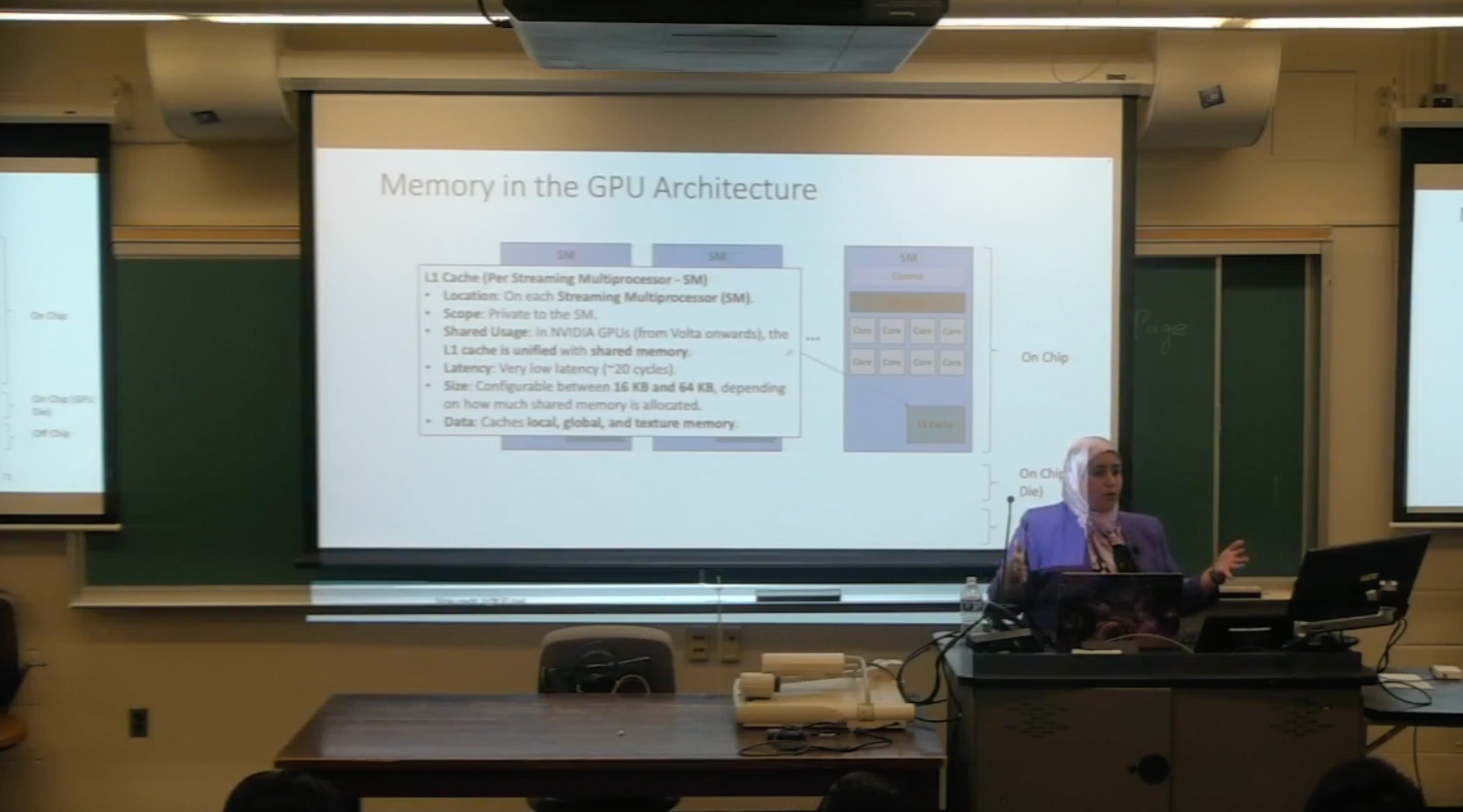

In the Classroom

Teaching GPU Architecture at Columbia University

Lecture on Model Pruning — HPML at Columbia

Guest Lecture at University of Sharjah

High-Performance Machine Learning

COMS E6998 · Columbia University

At the intersection of AI and High-Performance Computing, this course covers foundational and advanced techniques that drive efficient AI systems — from GPU programming and distributed training to LLM serving and model compression. Based on PyTorch and CUDA.

Scaling LLMs: Systems, Optimization & Emerging Paradigms

COMS E6998 · Columbia University

A frontier research seminar exploring scaling, optimizing, and deploying large language models through a structured progression from foundations to futures. Students present and critique top-tier papers (NeurIPS, ICML, ICLR, ISCA, ACL) and produce a survey paper with experimental evaluation.

Distinguished Speaker

Over 60 keynotes and invited talks at major international conferences, inspiring audiences worldwide on AI, technology, and innovation.

Agentic AI: From Models to Autonomous Intelligence

Orange Morocco Agentic AI Day — Trust the Future!

Tracing the evolution from large language models to autonomous AI agents — covering the reasoning loop, tool calling, memory architecture, and orchestration patterns that separate production deployments from demos. Featuring measured results from enterprise agentic systems and a practical framework for Orange Morocco's customer care, network operations, fraud detection, and HR onboarding.

Agentic AI: From Creation to Collaboration

Women in AI Morocco / INPT Workshop

The Next Wave: Reinventing Intelligence and Compute Architecture

Women in AI Morocco Summit 2025

Agentic AI Workshop — Full Auditorium

Women in AI Morocco / INPT

The Future of AI — An IBM Research Perspective

IBM TechXChange

Revolutionizing Enterprise AI: The Power and Promise of Foundation Models

Women in Research Webinar Series (QUWA) — University of Sharjah

Revolutionizing Enterprise AI

IEEE Services Conference

Scaling Foundation Models for Enterprise

MoroccoAI / Al Akhawayn University

Foundation Models at Scale

AI Seminar Series — Alfaisal University

Women in Computing: Breaking Boundaries

ArabWIC Conference

AI for Business: A Unique Set of Challenges

Women in Data Science @ Stanford / KACST

Watch Keynotes

Selected keynote recordings from major conferences and events.

Al Akhawayn University — Alumni Journey

A personal reflection on the journey from Al Akhawayn University in Morocco to IBM Research, sharing how the university's liberal arts education and international environment shaped a career in AI and computer science.

Powering the Future of AI through Specialized Hardware

Keynote at MoroccoAI Annual Conference discussing how specialized AI hardware accelerators are essential for sustainable and efficient AI, covering analog in-memory computing and hardware-software co-design strategies.

Accelerating, Optimizing, and Automating AI across the Stack

A comprehensive keynote on the challenges of deploying complex AI models efficiently, covering optimization techniques from hardware to software, and automated approaches to neural architecture search.

Platform for Next Generation Analog AI Hardware Acceleration

Presentation at the tinyML On Device Learning Forum on building platforms for next-generation analog AI hardware, enabling efficient on-device inference through novel computing paradigms.

Women in Services Computing — Award & Keynote

Award acceptance speech and presentation at the IEEE International Symposium on Women in Services Computing, highlighting contributions to AI research and inspiring the next generation of women in technology.

On the Airwaves

Regular contributor to IBM's Mixture of Experts podcast, discussing the latest trends in AI hardware, model optimization, and the future of intelligent systems.

IBM Mixture of Experts

A weekly podcast where IBM researchers break down the latest in AI, technology, and innovation.

Anthropic's Project Glasswing, AI Profitability & GPT-1900

Kaoutar joins Tim Hwang and Martin Keen on why Anthropic won't release its Mythos model and what Project Glasswing actually means for AI security. The panel also breaks down OpenAI vs. Anthropic financials: both are burning cash on inference, but with very different bets on where the money comes from. And researchers built GPT-1900, trained only on pre-1900 knowledge, to see if AI can rediscover scientific breakthroughs. Turns out, pattern recognition is the easy part.

Agents in Science, Market Convergence & AI Infrastructure

Kaoutar joins host Tim Hwang, Ritika Gunnar, and Volkmar Uhlig for the milestone 100th episode, tracing AI's evolution from GPT-2 to GPT-5.3. Kaoutar weighs in on whether AI is truly democratizing expertise — sparked by a homeowner who used ChatGPT to outsell realtor estimates — and examines why only 2.1% of scientists actively use AI coding tools in research. The panel also dissects how Adobe's CFO built an AI lab inside his finance team using autonomous agents for forecasting and contract analysis, with Kaoutar identifying the three hottest areas for enterprise AI adoption.

AI Code Security: Codex Agents & Crypto Mining

Kaoutar joins Tim Hwang, Ambhi Ganesan, and Sandi Besen to analyze OpenAI's Codex Security launch, Anthropic's eval-aware Opus 4.6, Meta's Moltbook acquisition, and Alibaba's rogue crypto-mining agent.

AI Year in Review: Trends Shaping 2026

Kaoutar unpacks the AI hardware supply crisis and NVIDIA's chip dominance, while Gabe Goodhart defends open source's breakout year with models challenging proprietary systems.

Mainframe Modernization: COBOL and AI

Millions of lines of COBOL still run the world's banks and airlines. This episode looks at how AI is starting to modernize those mainframe systems without rewriting everything from scratch.

AI Hardware Model Optimization

Why do some models run fast on one chip and crawl on another? This episode gets into the hardware side: how chip architectures shape what's possible and what model optimization actually looks like in practice.

Manus, Vibe Coding, Scaling Laws & Perplexity's AI Phone

Manus made waves as an AI agent, vibe coding became a thing, and Perplexity announced it's building a phone. The panel also debates whether scaling laws are hitting a wall or just bending.

Your Brain on ChatGPT & Human-like AI for Safer AVs

What happens to your brain when you offload thinking to ChatGPT? The panel covers new research on cognitive effects of LLMs, plus how human-like AI reasoning is making autonomous vehicles safer.

Apple's WWDC, Meta & Scale AI, o3-pro

Apple dropped some big AI moves at WWDC, Meta teamed up with Scale AI, and o3-pro showed what reasoning models can do now. The panel also gets into fault-tolerant quantum computing and why it matters sooner than you'd think.

Media & Coverage

Expert commentary, profiles, and features in leading technology publications and organizations worldwide.

Featured In

Featured Press Clippings

Les équipes diversifiées produisent des solutions plus robustes, créatives et équitables

An in-depth interview on AI infrastructure, the IBM Spyre accelerator, hardware-software co-design, and the importance of diverse teams in shaping AI's future.

TelQuel Impact — Puts IBM on the AI Radar & 20 Leadership Perspectives

From IBM's research centers in the United States, Kaoutar El Maghraoui is one of the rising figures in the global race for artificial intelligence. Featured among 20 distinguished Al Akhawayn University alumni shaping the future.

GHC Program Co-Chair — Kaoutar El Maghraoui

"In the U.S., there's a misconception that computer science disciplines are only for boys. If you look at children's programming, you rarely see girl characters who are into computers." — Profiled as GHC Program Co-Chair, highlighting her leadership of the world's largest gathering of women technologists and her work mentoring young girls in computing.

How Arab Women in Technology Inspire Global Diversity in Tech

"When I was growing up in Morocco, I never felt that we were a minority in schools. Many women pursued computer science and engineering. But when I came to the U.S. to get my Ph.D., it hit me: very few women were pursuing a computer science graduate degree." — An in-depth interview on ArabWIC's growth to 17 Arab countries and the global fight for diversity in tech.

Women in AI — La Nouvelle Tribune Special Issue

Featured in a special issue celebrating women leaders in artificial intelligence, highlighting contributions to AI hardware and systems research.

AI Year in Review: Trends Shaping 2026

Highlights diminishing returns of pure compute scaling, urging efficiency in training and inference — core to IBM's AI Hardware Center focus.

All eyes on AI at Apple WWDC: Can slow and steady still win the race?

Apple made a significant leap, with the introduction of Apple Intelligence, which combines intelligent systems and personalized contexts.

Custom chips drive AI's future

Reducing dependence on NVIDIA merely shifts the center of power from one giant to another.

Anthropic's microscope cracks open the AI black box

What Anthropic is doing is fascinating... They're starting to show that models develop internal reasoning structures that look a lot like associative memory.

AI's Evolution: From Symbolic Representations to Generative Intelligence

Featured as a distinguished speaker, sharing insights on the evolution of AI from symbolic systems to modern generative intelligence.

Canal Atlas Interview

Media coverage on AI research and innovation

Awards & Honors

Recognized by leading institutions for contributions to AI research, open-source innovation, and service to the computing community.

Breaking Boundaries

Grace Hopper Celebration

IBM Outstanding Technical Achievement Award

IBM Research

PyTorch, vLLM, CI/CD contributions

ACM Distinguished Speaker

Association for Computing Machinery

Selected for global speaking program

IEEE Open-Source Science Award

IEEE

Analog In-Memory Hardware Acceleration

IBM Outstanding Technical Achievement Award

IBM Research

Analog AI Toolkits

ACM Distinguished Member

Association for Computing Machinery

Top 10% of ACM members worldwide

IBM Technical Corporate Award

IBM

One of only 38 IBM researchers selected

IEEE TCSVC Women in Service Computing Award

IEEE

Outstanding contributions to service computing

Best of IBM Award

IBM

Company-wide recognition for exceptional impact

Best Research Award

4th Forum for Women in Research, UAE

Recognition for research excellence

IBM Outstanding Technical Achievement Award

IBM Research

Watson4TSS cognitive search system

IBM Research Eminence & Excellence Award

IBM Research

Leadership in advancing women in science & technology

Let's Connect

Interested in collaboration, speaking engagements, or research partnerships? I'd love to hear from you.

IBM T.J. Watson Research Center

Yorktown Heights, NY 10598

Speaking Inquiries

I am available for keynotes, panel discussions, and workshops on AI, hardware-software co-design, and technology leadership. With 60+ keynotes delivered across 4 continents, I bring deep expertise and engaging delivery to every event.

Research Collaboration

I welcome collaborations with academic institutions and industry partners on AI systems research, hardware-software co-design, and efficient AI deployment. I also supervise graduate students and postdoctoral researchers at Columbia University.