DeepSeek's Long March: V4, Sparse Attention, and What Open-Weight AI Just Taught Us

A hardware-aware reading of V4, DeepSeek Sparse Attention, and six lessons the open-weight world should be carrying forward. Companion to the IBM Mixture of Experts episode on V4, Decoupled DiLoCo, and IBM Granite 4.1 + Bob.

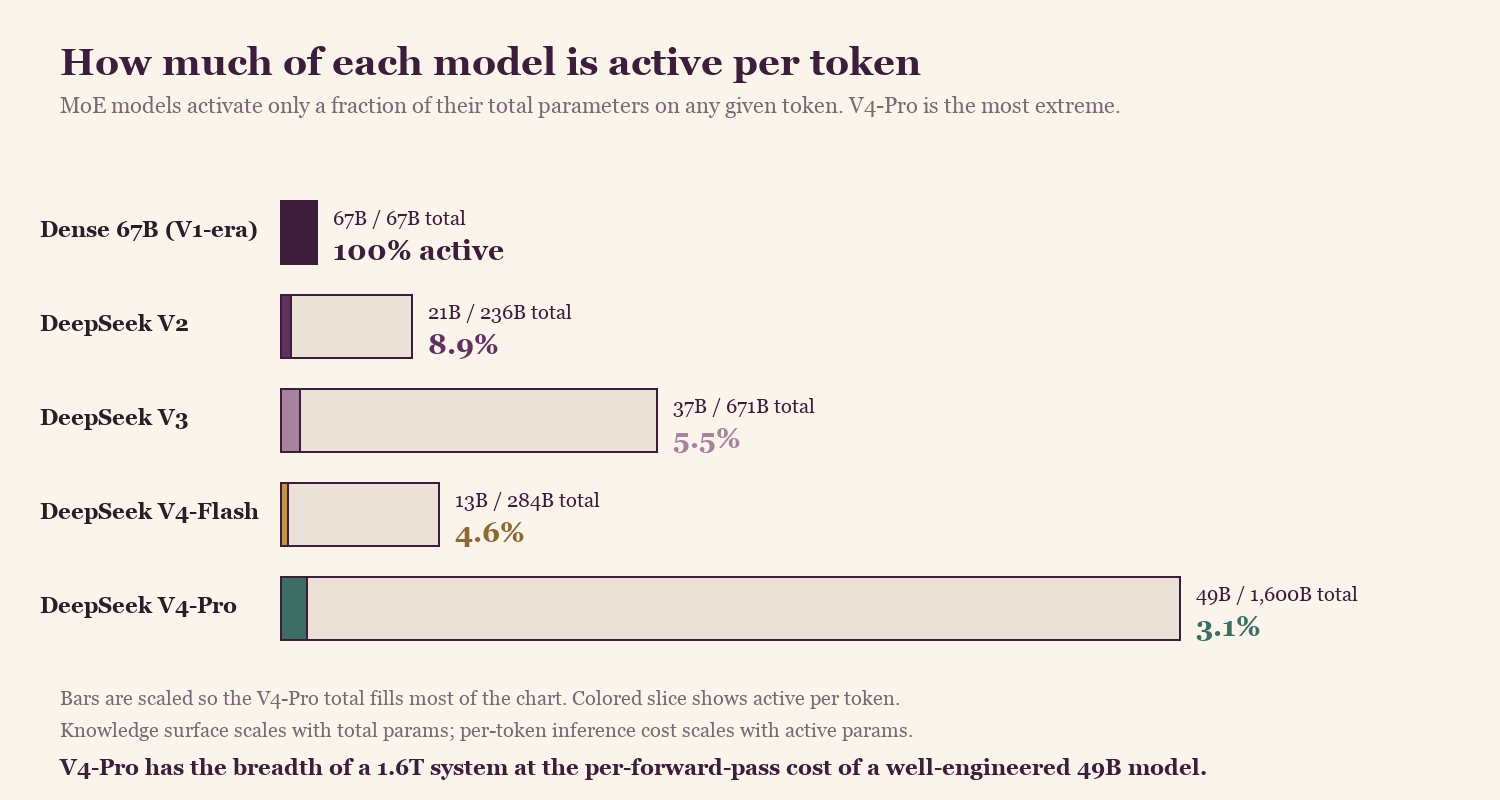

DeepSeek V4 [1] shipped on April 24, 2026, and most of the coverage went straight to the parameter count. 1.6 trillion total. The number I keep thinking about is the second one: 49 billion active per token, in a million-token context, with sparse attention doing the work that dense attention used to do.

The 1.6T number on its own would just be a bigger model. The 49B-active-per-token number is what makes a 1.6T model economically viable to actually run. Both exist because a small Hangzhou lab spent 27 months grinding through architectural bets that, taken together, quietly rewrote the economics of open-weight frontier AI.

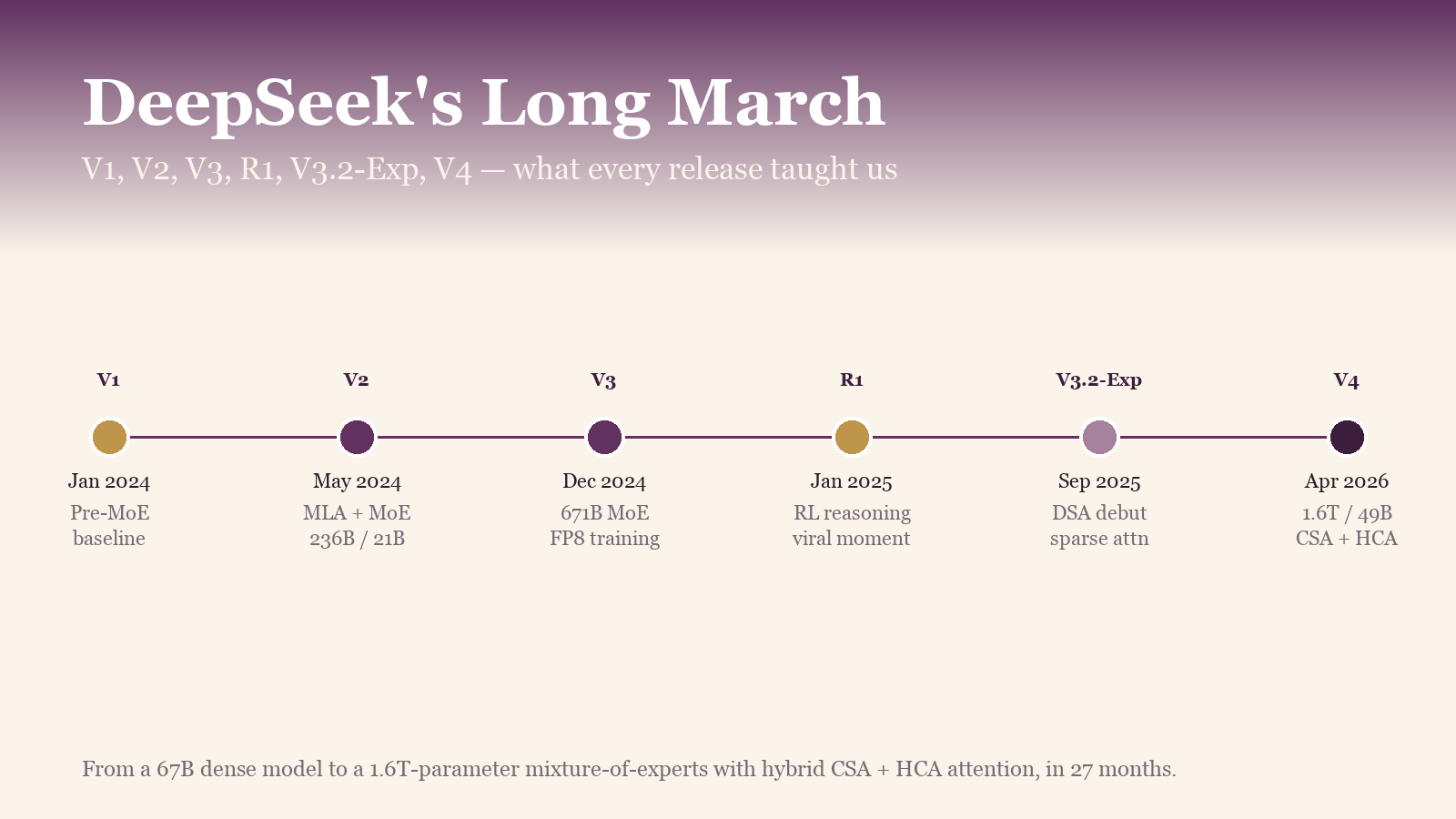

The 27-month arc, in six releases

From a 67B dense model to a 1.6T-parameter MoE with sparse attention as the default. The architecture changed every release. The thesis didn't.

Six releases, each pushing a different idea. The strategy gets clearer with each one.

V1 (early 2024) was a 67B dense model, conventional architecture, no MoE. Useful but not differentiated. The team was building experience with the training and serving infrastructure.

V2 (May 2024) is where the architecture changed. DeepSeek-V2 introduced Multi-head Latent Attention (MLA) [2], which compresses the KV cache into a low-rank latent vector, and combined it with DeepSeekMoE, their sparse expert architecture. Headline numbers: 236B total / 21B active, 128K context, 93.3% less KV cache and 5.76x higher generation throughput compared to a dense 67B baseline. This is the point where the lab stopped optimizing for model size and started optimizing for inference cost.

V3 (December 2024) scaled the same approach to 671B / 37B active and ran most of the compute-heavy matrix multiplies in the forward and backward pass in FP8 from the start of pre-training [3]. The full run took 2.788M H800 GPU-hours. Not the largest model in the world, but among the most efficient frontier-scale training runs anyone had published, and a direct counter to the "we need more H100s" story that dominated US AI conversation at the time.

R1 (January 2025) is when DeepSeek became widely known outside AI research. R1 [4] showed reasoning capabilities could be incentivized through pure reinforcement learning, no labeled chain-of-thought data required. AIME 2024 pass@1 jumped from 15.6% to 71.0%. Closed-API prices started dropping within weeks. Functionally a market event as much as a model release.

V3.1 / V3.2-Exp (September 2025) introduced DeepSeek Sparse Attention (DSA) [5], the architectural change that cut the quadratic cost of long context down to roughly linear. V3.2-Exp was explicitly experimental, but the path to V4 was already obvious to anyone watching the arXiv feed.

V4 (April 24, 2026) [1] ships DSA as the production default, scales the MoE to 1.6T / 49B active, and makes 1M context the default tier across web, app, and API, available at the baseline pricing rather than as a premium add-on.

Six releases. One thesis: make the parts of the model that aren't doing work as small and as skippable as possible, then scale.

Start with MLA, not DSA

Multi-head Latent Attention (MLA) [2] shipped with DeepSeek-V2 in May 2024, nearly two years before V4. Every later DeepSeek model is built on top of it, DSA included. If you want to understand why DeepSeek's inference cost keeps dropping while other labs' inference cost stays the same, this is the architectural choice to look at first.

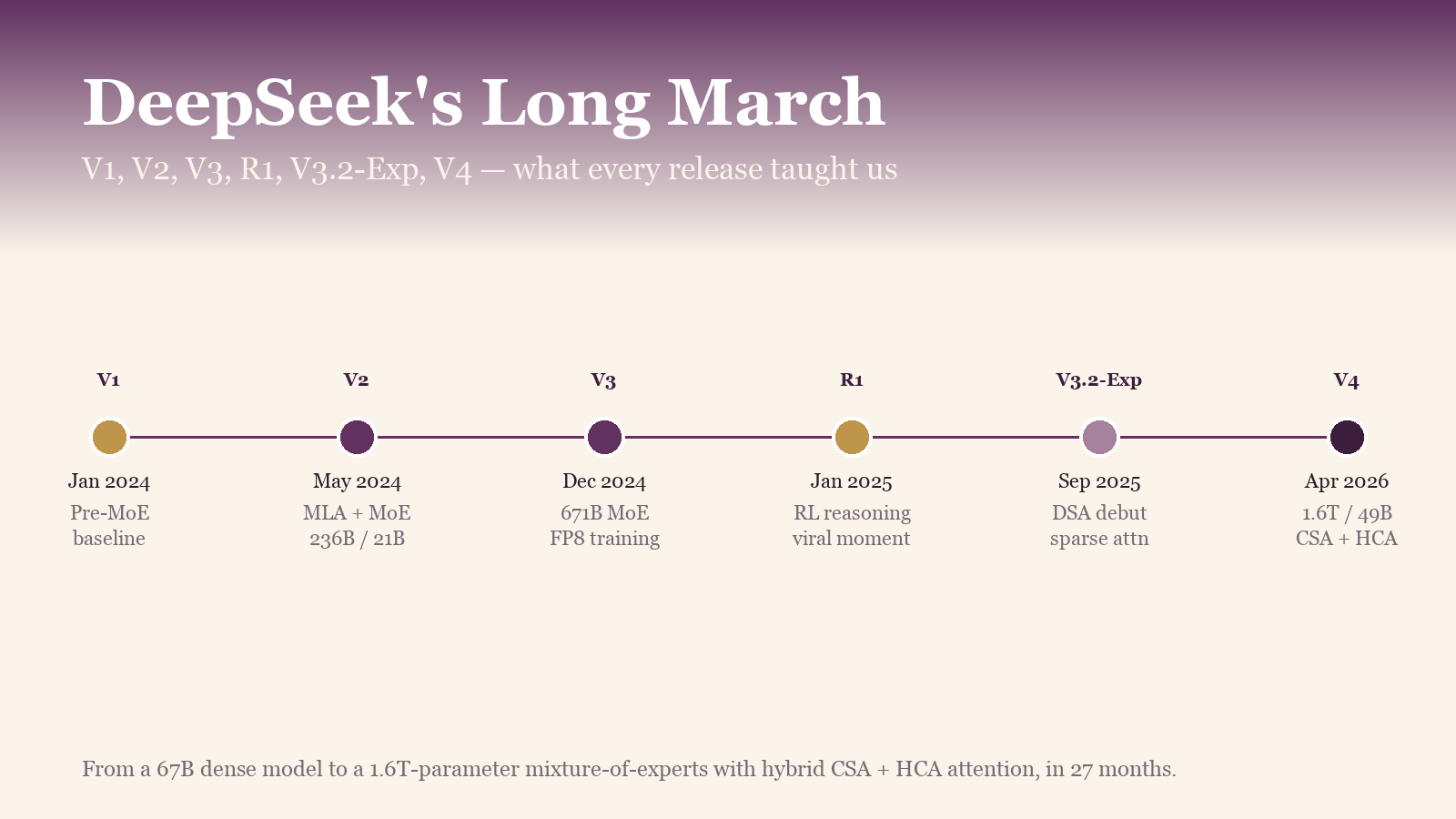

The problem MLA solves: in a standard transformer with Multi-Head Attention (MHA), every attention head stores its own K and V vector per token in the KV cache. A 70B-class model with 64 heads stores 128 cache vectors per token in every layer. Run that out to 128K context and the cache is in the hundreds of gigabytes per serving session. A single H100 with 80GB of HBM (high-bandwidth memory) cannot hold even one such conversation. That isn't just a serving constraint; it's the reason MLA was necessary.
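A back-of-envelope check on that "hundreds of gigabytes" figure. The layer count (80), head dimension (128), and BF16 storage below are my assumptions for a generic 70B-class MHA model, not numbers from any specific model card.

```python
def mha_kv_cache_bytes(tokens, layers=80, heads=64, head_dim=128, bytes_per_value=2):
    """KV cache size for standard multi-head attention.

    Every layer stores one K and one V vector per head per token.
    The defaults are assumed 70B-class values, chosen for illustration.
    """
    per_token = layers * heads * 2 * head_dim * bytes_per_value
    return tokens * per_token


cache = mha_kv_cache_bytes(tokens=128 * 1024)
print(f"{cache / 2**30:.0f} GiB")  # prints "320 GiB"
```

Under those assumptions a single 128K-token session needs about four H100s' worth of HBM for the cache alone, before any weights are loaded.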

Llama 3 partially addressed this with Grouped-Query Attention (GQA), where several query heads share a single K/V head. DeepSeek-V2 used a different approach. It compresses K and V together into a single low-rank latent vector per token, and reconstructs the per-head K and V on demand at attention time.

Standard MHA stores K and V for every head on every token. MLA stores one low-rank latent vector per token and reconstructs the per-head K and V when attention asks for them. DeepSeek-V2 reported a 93% reduction in KV cache size with no loss in capability.
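The store-one-latent, rebuild-per-head pattern can be sketched in a few lines. This is a toy illustration, not DeepSeek's implementation: the sizes and the names `w_down`, `w_up_k`, and `w_up_v` are hypothetical, the weights are random, and it leaves out the separate positional (RoPE) key path and the kernel-level fusions a production MLA uses.

```python
import random


def matvec(matrix, vec):
    """Plain matrix-vector product on nested lists."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


def random_matrix(rows, cols, rng):
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]


# Toy sizes, assumed purely for illustration.
D_MODEL, N_HEADS, HEAD_DIM, D_LATENT = 32, 4, 8, 6

rng = random.Random(0)
w_down = random_matrix(D_LATENT, D_MODEL, rng)  # hidden state -> shared latent
w_up_k = [random_matrix(HEAD_DIM, D_LATENT, rng) for _ in range(N_HEADS)]
w_up_v = [random_matrix(HEAD_DIM, D_LATENT, rng) for _ in range(N_HEADS)]


def cache_entry(hidden):
    """What is stored per token: one latent vector shared by all heads."""
    return matvec(w_down, hidden)


def reconstruct(latent, head):
    """Rebuild one head's K and V from the cached latent at attention time."""
    return matvec(w_up_k[head], latent), matvec(w_up_v[head], latent)


hidden = [rng.uniform(-1, 1) for _ in range(D_MODEL)]
latent = cache_entry(hidden)
k0, v0 = reconstruct(latent, head=0)

mha_values_per_token = N_HEADS * 2 * HEAD_DIM  # what MHA would cache
mla_values_per_token = len(latent)             # what this sketch caches
print(mha_values_per_token, "vs", mla_values_per_token)
```

The trade is explicit here: the cache holds `D_LATENT` numbers per token instead of `N_HEADS * 2 * HEAD_DIM`, and the price is an extra up-projection every time attention reads the cache.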

A few non-obvious things follow.

1M context is economically viable because of MLA, not DSA. Pre-MLA, the KV cache at a million tokens would have been hundreds of gigabytes per serving session. Post-MLA, it's roughly a fifteenth of that. That's the floor under everything else. DSA stacks on top.

DSA works because MLA has already shrunk the cache. DSA in V3.2 and V4 runs directly on top of MLA in multi-query mode. The top-k selector operates over the latent representations, and the lightning indexer can run cheaply in FP8 specifically because the cache is already compressed and shared across query heads. Apply DSA to standard MHA instead, and the indexer has to re-rank every head independently; the kernel implementation becomes substantially harder to make efficient.

Adding MLA support is the hardest part of porting any DeepSeek model. Production MLA kernels in vLLM and SGLang landed over a substantial multi-release effort after V2 shipped, not in a single drop. Different memory layout, different projection geometry, different math at the matmul boundary.

In our own work porting MLA-class attention to the Spyre AIU, the friction point we ran into is that MLA's pattern — store the compressed latent, project up to per-head K and V at attention time — doesn't map cleanly to the cache-and-attend kernel shapes that exist in most serving runtimes. The fused decompress-then-project step has to be a new primitive, with its own tiling decisions and its own numerics, and that one change ripples through the inference graph: memory layout for the latent cache, how the indexer reads it in DSA mode, where the dequant-and-project work lives relative to the softmax. MLA is where most of the kernel engineering goes when you're bringing up a non-NVIDIA accelerator for these models.

FP8 native training, and why it's harder than it sounds

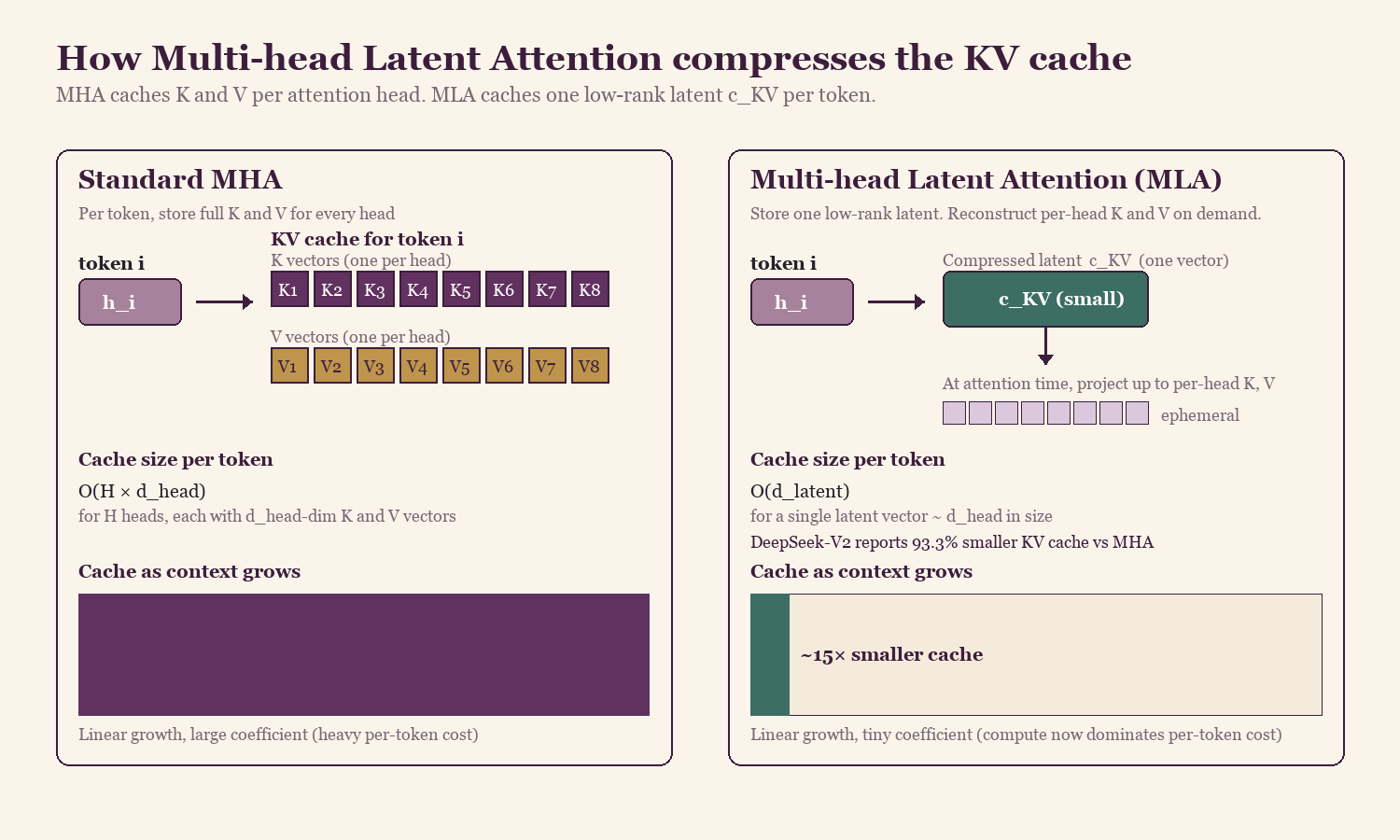

The other big V3 contribution gets less attention than it deserves: DeepSeek ran the bulk of the forward- and backward-pass matrix multiplies of a 671B-parameter MoE in FP8 from the start of pre-training, keeping a few sensitive components in higher precision [3]. This is hard. FP8 formats (E4M3 and E5M2) have far fewer exponent and mantissa bits than BF16 or FP16, which makes the scaling strategy critical: a naive approach will overflow or underflow somewhere in the network, and the loss diverges.

Why fine-grained scaling fixes this: if your matrix has values from 0 to 1000 with a few outliers around 5000, a single per-matrix scale either saturates the outliers or rounds the small values to zero. Slice the matrix into small tiles, give each tile its own scale, and most tiles end up with a tight dynamic range that fits cleanly into FP8. The outlier tiles get their own larger scale and stay representable.

DeepSeek's specific approach: every 1×128 row of activations gets its own scale factor. Every 128×128 block of weights gets its own scale factor. The running sum stays in FP32 (32-bit) inside the CUDA cores at the matmul boundary so precision isn't lost during accumulation.
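Here is a small simulation of why the tile size matters. It is a sketch under stated assumptions: `fake_e4m3` is my own crude stand-in for E4M3 rounding (3 mantissa bits, maximum 448, subnormals below 2^-6), not a real FP8 library call, and the data is made up to mimic one quiet tile next to one tile with an outlier.

```python
import math

FP8_MAX = 448.0  # largest finite E4M3 value


def fake_e4m3(x):
    """Round x onto an E4M3-like grid (illustrative stand-in, not a real FP8 cast)."""
    x = max(-FP8_MAX, min(FP8_MAX, x))
    if x == 0.0:
        return 0.0
    exponent = max(math.floor(math.log2(abs(x))), -6)  # clamp at the min normal exponent
    step = 2.0 ** (exponent - 3)                       # 3 mantissa bits per binade
    return round(x / step) * step


def quantize_dequantize(row, tile):
    """Quantize with one scale per `tile` consecutive elements, then dequantize."""
    out = []
    for start in range(0, len(row), tile):
        chunk = row[start:start + tile]
        peak = max(abs(v) for v in chunk)
        scale = peak / FP8_MAX if peak > 0 else 1.0
        out.extend(fake_e4m3(v / scale) * scale for v in chunk)
    return out


# 128 small activations, then a tile of large values with one 5000 outlier.
row = [0.001 * (i + 1) for i in range(128)] + [1000.0] * 127 + [5000.0]


def max_rel_error(approx):
    return max(abs(a - b) / abs(b) for a, b in zip(approx, row))


per_row = quantize_dequantize(row, tile=len(row))  # one scale for the whole row
per_tile = quantize_dequantize(row, tile=128)      # one scale per 1x128 tile

print("single scale, worst relative error:", max_rel_error(per_row))
print("per-tile scale, worst relative error:", max_rel_error(per_tile))
```

With a single scale, the outlier sets the range and the smallest activations round to exactly zero. With one scale per 128 elements, every value in this example stays within the roughly 6% worst-case rounding error that 3 mantissa bits allow.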

Activations scale per row-tile of 128 elements. Weights scale per 128×128 square tile. Accumulation runs in FP32 inside the CUDA cores. The relative loss delta versus a BF16 baseline stays under 0.25% across training.

The result: 2.788M H800 GPU-hours end-to-end on V3, roughly half what comparable-capability dense models needed at the time. FP8 matrix multiplies run at roughly 2× the throughput of BF16 on Hopper tensor cores, and HBM traffic drops nearly proportionally. That's the difference between "we trained a frontier model" and "we couldn't afford to."

Two more V3 innovations are worth knowing about, both of which carry into V4. Auxiliary-loss-free load balancing replaces the load-balancing loss most MoE papers still use with a bias-based routing strategy: cleaner training, no extra loss term competing with the main language modeling loss. And Multi-Token Prediction (MTP) trains the model to predict multiple future tokens per step. The same MTP heads double as a built-in speculative decoder at inference time. The model drafts several tokens ahead and verifies them in parallel, which is how V4 serves agentic coding workloads at the speed it does.
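The bias-based balancing idea is easier to see in a toy simulation. This is a minimal sketch, assuming four experts, top-1 routing, a fixed update step, and synthetic affinities in which expert 0 starts out heavily favoured; the function names and all numbers are mine, not DeepSeek's.

```python
import random


def route(affinity, bias, top_k=1):
    """Pick experts by affinity + bias. The bias influences selection only."""
    ranked = sorted(range(len(affinity)),
                    key=lambda e: affinity[e] + bias[e], reverse=True)
    return ranked[:top_k]


def update_bias(bias, load, step):
    """Nudge overloaded experts down and underloaded experts up. No loss term involved."""
    mean = sum(load) / len(load)
    return [b - step if n > mean else b + step if n < mean else b
            for b, n in zip(bias, load)]


def simulate(batches=20, tokens=200, step=0.05, seed=0):
    rng = random.Random(seed)
    base = [0.9, 0.5, 0.5, 0.5]  # synthetic: the router strongly prefers expert 0
    bias = [0.0] * len(base)
    history = []
    for _ in range(batches):
        load = [0] * len(base)
        for _ in range(tokens):
            affinity = [b + rng.uniform(-0.1, 0.1) for b in base]
            for expert in route(affinity, bias):
                load[expert] += 1
        history.append(load)
        bias = update_bias(bias, load, step)
    return history


history = simulate()
print("first batch load:", history[0])
print("last batch load: ", history[-1])
```

In the first batch every token lands on expert 0. As the bias adjusts between batches, the load spreads out without any auxiliary loss competing with the language-modeling objective.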

What DSA actually does, and why it's a hardware story

Sparse attention has a long history: Longformer, BigBird, sliding-window patterns in Gemma 3 and Olmo 3. DeepSeek's contribution isn't the idea. It's two specific engineering choices that make DSA, to my knowledge, one of the first openly documented sparse-attention systems deployed as the default path at frontier scale.

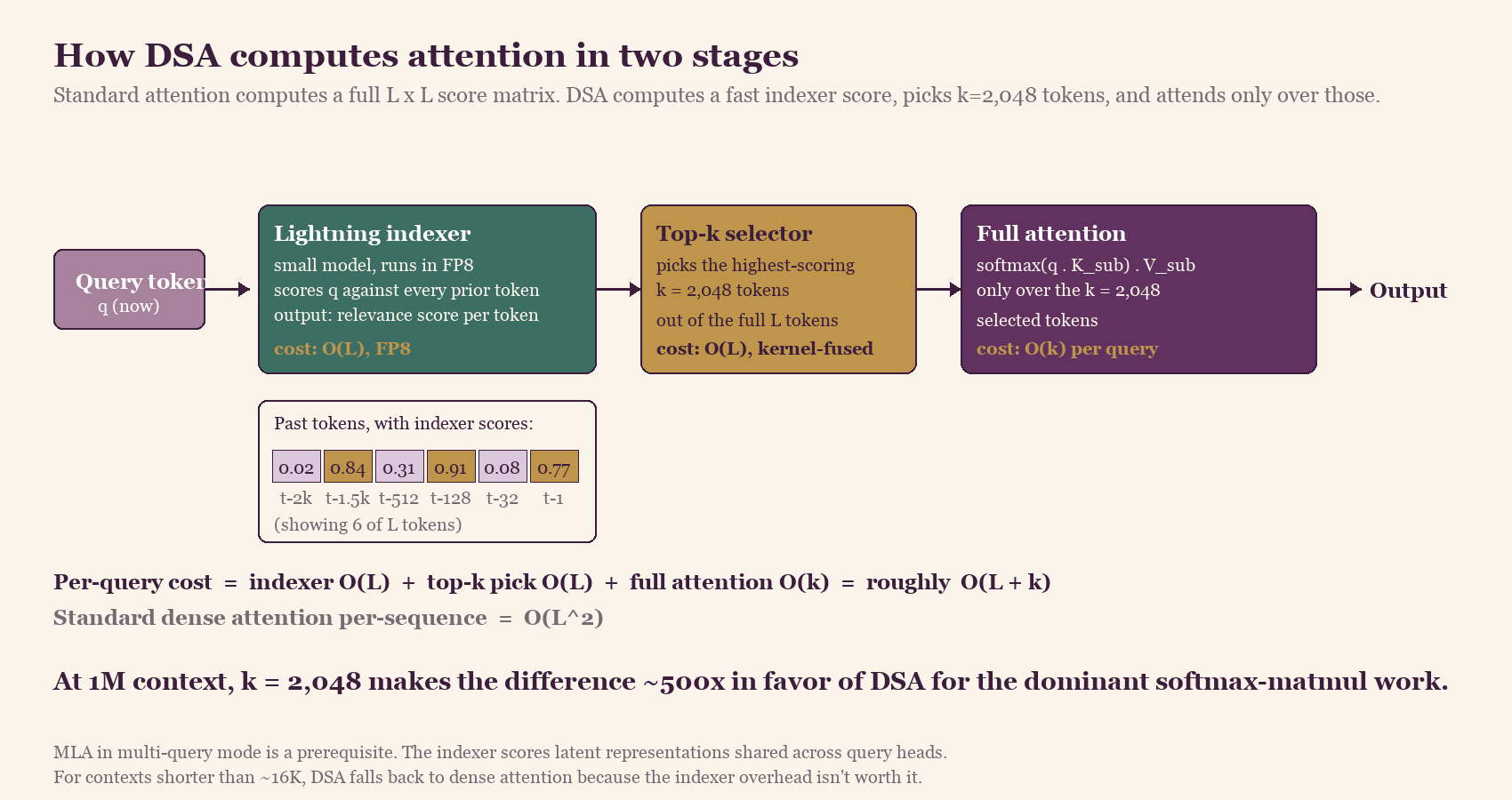

The two-stage indexer. Standard attention computes a similarity score between each token and every other token. DSA replaces the expensive part with a fast scoring pass: a small indexer model running in FP8 computes a quick relevance score between the current token and every prior token. A top-k selector then picks the k=2,048 highest-scoring tokens. Expensive full attention runs only over those.
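A minimal single-query sketch of that score, select, attend pipeline. It is illustrative only: `index_query` and `index_keys` are hypothetical stand-ins for the indexer's own small projections, everything runs in ordinary Python floats rather than FP8, and there is no kernel fusion.

```python
import heapq
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def sparse_attention(query, keys, values, index_query, index_keys, k):
    """Two-stage attention for one query token over its prior tokens."""
    # Stage 1: cheap relevance score against every prior token.
    scores = [dot(index_query, ik) for ik in index_keys]
    # Stage 2: keep the k highest-scoring positions.
    selected = heapq.nlargest(min(k, len(scores)), range(len(scores)),
                              key=scores.__getitem__)
    # Stage 3: ordinary softmax attention, restricted to the selected positions.
    scale = 1.0 / math.sqrt(len(query))
    logits = [dot(query, keys[i]) * scale for i in selected]
    peak = max(logits)
    weights = [math.exp(l - peak) for l in logits]
    total = sum(weights)
    out = [sum(w * values[i][d] for w, i in zip(weights, selected)) / total
           for d in range(len(values[0]))]
    return out, sorted(selected)


# Six prior tokens; the indexer rates tokens 1 and 3 as most relevant.
index_keys = [[0.1], [0.9], [0.2], [0.8], [0.0], [0.3]]
keys = [[0.0, 0.0] for _ in range(6)]      # identical keys, so weights are uniform
values = [[float(i)] for i in range(6)]
out, picked = sparse_attention([1.0, 1.0], keys, values, [1.0], index_keys, k=2)
print(picked, out)  # [1, 3] [2.0]
```

Only stage 3 touches the full-width keys and values, and it does so for `k` positions regardless of how long the context is.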

V3.2-class DSA: each query token goes through a cheap FP8 indexer that scores every prior token, a kernel-fused top-k step that picks the 2,048 most relevant ones, and full attention computed only over that subset. The dominant attention cost is reduced from quadratic to near-linear in the context length for fixed k. V4 extends this with an FP4 indexer and a CSA + HCA hybrid; see the next section for the V4-specific architecture.

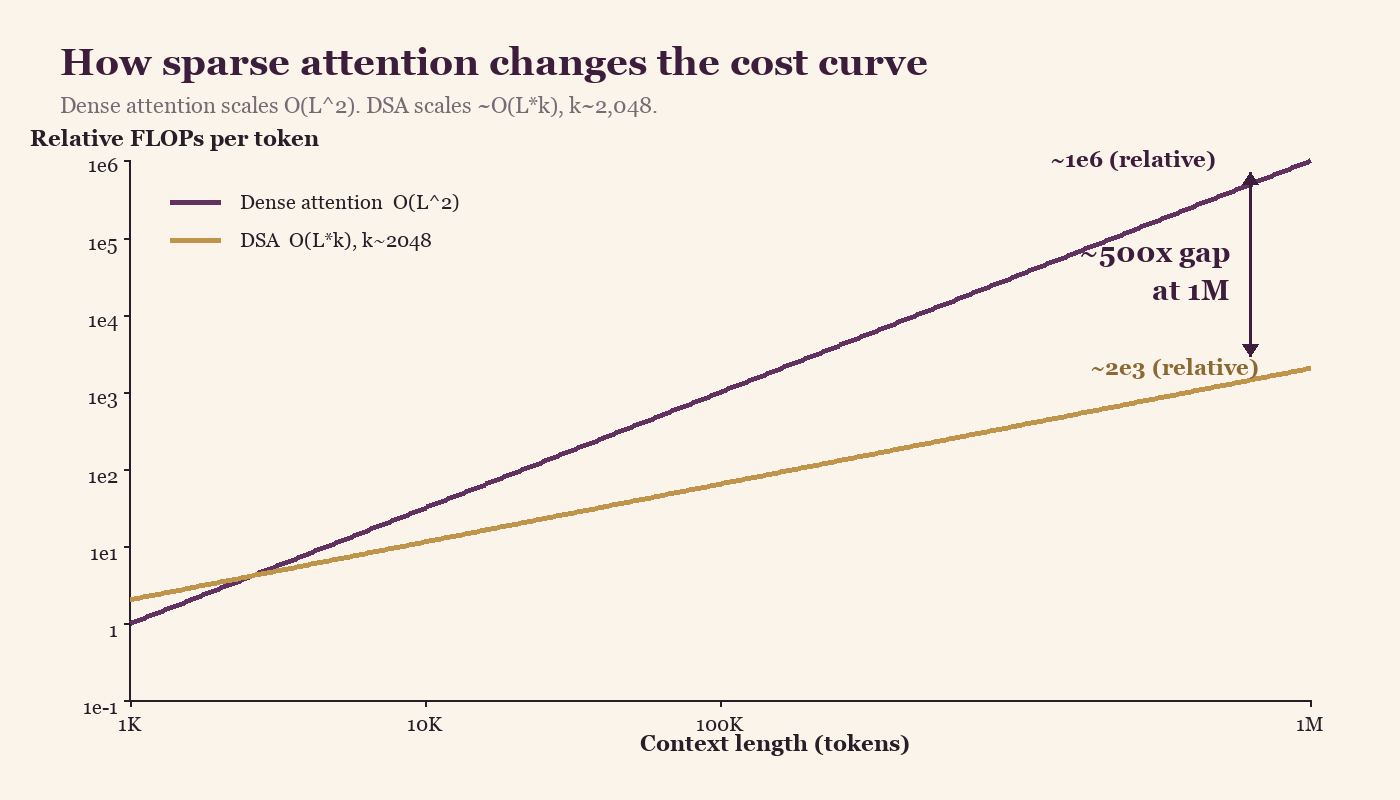

The complexity story: over a sequence of length L, standard attention costs O(L²). DSA removes the dominant quadratic term: for each query, the expensive softmax-and-matmul work runs over only ~2,048 tokens instead of all L, so that part costs O(L·k) in total. The indexer pass and the top-k step still do O(L) work per query, but they run in FP8 at much lower per-token cost, so scaling is effectively near-linear in practice for the configurations DeepSeek targets.

Relative FLOPs per token on a log-scale y-axis (each gridline is 10×). Dense attention scales quadratically with context length. DSA's dominant attention cost scales near-linearly because the expensive softmax-and-matmul work runs over a fixed k. At 1M context, the gap in the dominant term is roughly 500× in favor of DSA — note that the y-axis is logarithmic, so the visual gap is compressed relative to the actual ratio.
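The roughly 500× figure is just the ratio of keys touched by the expensive path. A quick check, counting only the dominant softmax-and-matmul term and deliberately ignoring the indexer and top-k cost (the function name is mine):

```python
def dominant_cost_ratio(context_len, k=2048):
    """Keys attended per query: dense uses all of them, the sparse path at most k."""
    return context_len / min(k, context_len)


for length in (8_192, 131_072, 1_000_000):
    print(f"{length:>9,} tokens: {dominant_cost_ratio(length):.0f}x")
```

At 1,000,000 tokens the ratio is about 488×, which rounds to the 500× quoted above. Below k tokens the ratio is 1, which is why a dense fallback makes sense for short contexts.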

The hardware co-design. What most coverage misses: DSA isn't a software-only change any serving stack can adopt. The indexer runs in FP8 because Hopper tensor cores have an FP8 path. The variable per-query sparsity pattern requires custom CUDA kernels, because standard attention kernels can't handle "different k tokens per query" efficiently. It's built on top of MLA in multi-query mode so the KV cache stays compressed across queries. For short contexts it falls back to dense attention because the indexer overhead isn't worth it below a threshold length.

That last detail matters more than it sounds. DSA uses sparse attention when sparse attention is faster, dense when it isn't, with the switch happening inside a kernel designed for the accelerator's memory hierarchy. Retrofitting it into vLLM or TensorRT-LLM requires substantial kernel-level changes to those runtimes — not impossible, but more than a configuration flag.

DSA shipped first in V3.2-Exp in September 2025, hardened in V3.2 in December 2025, and continued to evolve through V4 in April 2026. Seven months from experimental to default. That's a team that ships kernels as production code, not as paper figures.

What V4 actually changed beyond V3.2's DSA

The description above is V3.2-class DSA, which is the foundation. V4 went further. The official V4 technical report describes a hybrid attention scheme that interleaves two related mechanisms across the layers of the model. (A note on what's documented versus inferred: the mechanism descriptions below are taken from DeepSeek's V4 technical report. Some of the serving-stack implications I draw at the end of this section are my read of the architecture rather than something DeepSeek explicitly documented.)

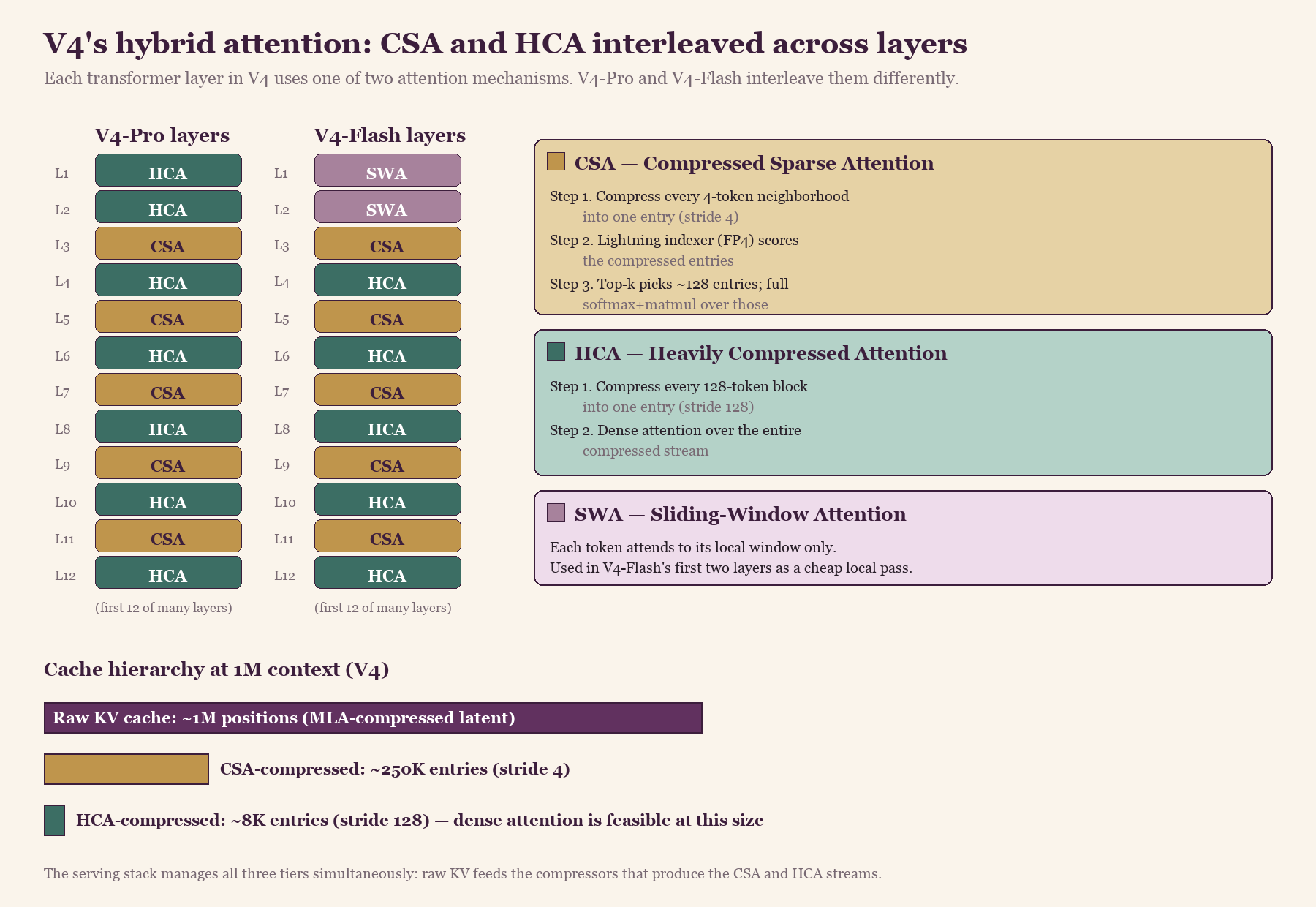

Compressed Sparse Attention (CSA) is the direct descendant of V3.2's DSA, but the indexer now scores over already-compressed entries. A learned token-level compressor with stride 4 condenses every neighborhood of tokens into a single entry, and the lightning indexer picks roughly 128 of those compressed entries per query for the expensive softmax-and-matmul step. The indexer itself moved from FP8 in V3.2 down to FP4 (MXFP4) in V4, with FP4 quantization-aware training keeping accuracy stable. That's another halving of bytes per indexer activation on top of everything DSA already did.

Heavily Compressed Attention (HCA) is more aggressive. It consolidates KV entries with stride 128 — at 1M context, that turns the cache from ~1M positions into roughly 8K compressed entries. The cache is small enough at that point that dense attention over the entire compressed stream is feasible again. HCA gives the model a holistic, low-resolution view of the full context that complements CSA's precise sparse retrieval over selected regions.

V4-Pro uses HCA for its first two layers and then alternates CSA and HCA through the rest of the model. V4-Flash starts with sliding-window attention before falling into the same CSA/HCA alternation. Different layers do different things: some get the precise sparse retrieval pattern of CSA, others get the holistic global view of HCA.

V4-Pro and V4-Flash both interleave Compressed Sparse Attention and Heavily Compressed Attention layer-by-layer. The serving stack manages three cache tiers simultaneously: raw KV at ~1M positions, CSA-compressed at ~250K entries (stride 4), and HCA-compressed at ~8K entries (stride 128).

The practical consequence for anyone building inference infrastructure on top of V4: the cache is now multi-level. Raw KV entries, CSA-compressed entries, and HCA-compressed entries all coexist, and the serving stack has to manage three different staleness and precision tiers. The kernel surface area is meaningfully larger than V3.2's, and the FP4 indexer in particular needs hardware that handles MXFP4 efficiently — which currently means Blackwell or Hopper-class with software emulation.
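The tier sizes follow directly from the two strides. A small helper, assuming each compressed tier holds one entry per stride-sized window and rounds up for a partial final window (the rounding rule is my assumption):

```python
import math


def cache_tier_entries(context_len, csa_stride=4, hca_stride=128):
    """Entry counts for the three cache tiers at a given context length."""
    return {
        "raw": context_len,
        "csa": math.ceil(context_len / csa_stride),
        "hca": math.ceil(context_len / hca_stride),
    }


tiers = cache_tier_entries(1_000_000)
print(tiers)  # {'raw': 1000000, 'csa': 250000, 'hca': 7813}
```

The HCA tier is two orders of magnitude smaller than the raw one, which is what makes dense attention over it affordable again.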

Memory bandwidth is the common target

The four innovations cut cost on different axes, but the underlying point of all of them is the same. Modern LLM serving is increasingly memory-bandwidth-bound rather than compute-bound: generating a token spends most of its time streaming weights and KV state from HBM into the compute units, not doing math. Each architectural choice in V4 reduces bytes moved per generated token. MoE activates only a small fraction of the parameters, so fewer weights cross HBM. MLA compresses the KV cache by ~15×, so fewer cache bytes cross HBM per attention step. FP8 halves the bytes per tensor for both weights and activations. DSA cuts the number of KV entries that the expensive attention path actually touches. Fewer FLOPs is a side effect; reducing memory traffic is what each of them is really doing.
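A rough roofline-style estimate shows how much the active-parameter count matters when decoding is bandwidth-bound. The sketch assumes batch size 1, that every active weight is streamed from HBM once per token, and a bandwidth of about 3.35 TB/s (roughly one H100 SXM). It ignores KV cache traffic and multi-GPU sharding, so treat the outputs as ratios, not latency predictions.

```python
def weight_stream_ms(active_params, bytes_per_param, hbm_bytes_per_s):
    """Lower bound on per-token decode time from streaming the active weights once."""
    return active_params * bytes_per_param / hbm_bytes_per_s * 1e3


HBM = 3.35e12  # assumed bandwidth in bytes/s, roughly one H100 SXM

dense_1_6t_fp8 = weight_stream_ms(1.6e12, 1, HBM)  # hypothetical dense 1.6T model
moe_49b_fp8 = weight_stream_ms(49e9, 1, HBM)       # 49B active parameters, FP8
moe_49b_bf16 = weight_stream_ms(49e9, 2, HBM)      # same, at 2 bytes per parameter

print(f"dense 1.6T, FP8:  {dense_1_6t_fp8:.1f} ms/token")
print(f"49B active, FP8:  {moe_49b_fp8:.1f} ms/token")
print(f"49B active, BF16: {moe_49b_bf16:.1f} ms/token")
```

Under these assumptions the sparse model needs about 14.6 ms of weight streaming per token against about 478 ms for a dense model of the same total size, and moving from BF16 to FP8 halves whichever number you start from.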

How the gains compound

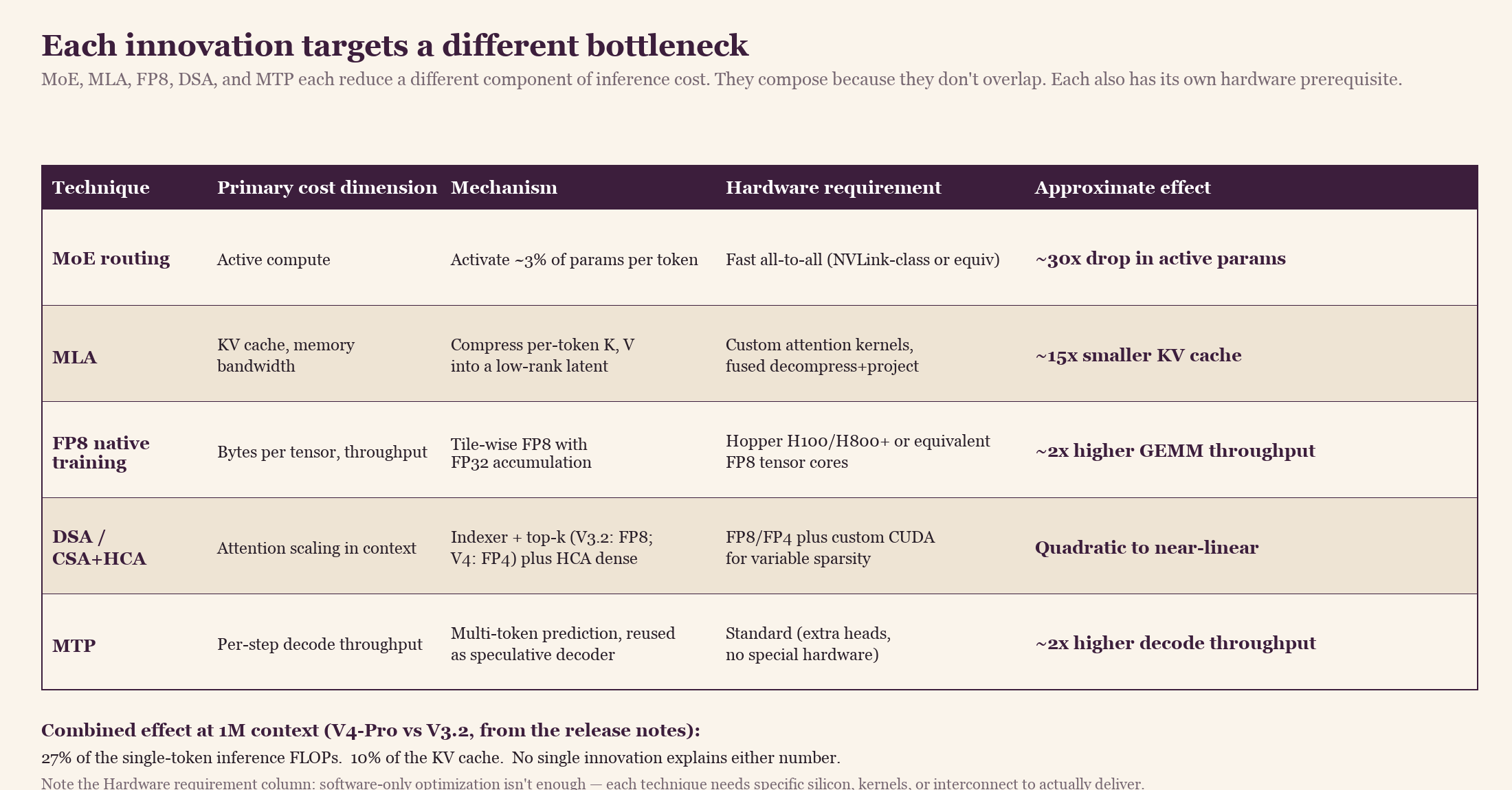

Each of these innovations would be a respectable paper on its own. The reason V4 performs as well as it does is that they target different bottlenecks and compose. MoE acts on active compute. MLA acts on KV cache size and the memory bandwidth that pays for it. FP8 acts on bytes per tensor for both weights and activations. DSA acts on attention's scaling in context length.

Each architectural choice cuts inference cost on a different axis, and they stack multiplicatively. The DSA column is the V4-Pro number at 1M context relative to V3.2, taken from the V4 release notes. Earlier columns indicate the rough magnitude of each contribution, not exact figures.

DeepSeek's V4 release notes state that V4-Pro needs 27% of the single-token inference FLOPs and 10% of the KV cache of V3.2 at 1M context. DSA alone isn't 4× better than what came before; the gain compounds. MLA had already compressed the cache. MoE had already gated 97% of the parameters off. FP8 had already halved the bytes per tensor. DSA on top of all of that turns the remaining quadratic context cost near-linear. Each layer of the stack depends on the one below it. Remove any one and much of the savings disappears.

The economics of sparse activation

V4-Pro activates roughly 3% of its parameters per token. The model knows what a 1.6 trillion-parameter system knows; the inference cost is closer to a 49B model.

When people ask me whether closed labs can match this, my answer keeps coming back to the same number. V4-Pro activates 49 billion parameters out of 1.6 trillion per token. Roughly 3%. The model has the capability of a 1.6T system at roughly the inference cost of a well-engineered 49B dense model.

This is the structural reason DeepSeek's aggressive sparsity strategy puts downward pressure on closed-API pricing. Closed labs likely run their own sparsity and MoE optimizations internally, but the available open weights at comparable capability put a hard floor under what any commodity-tier API can charge. The 2026 Stanford HAI AI Index documents the pattern: every time DeepSeek ships a competitive open model, closed-API prices drop within weeks [6].

The gap between hype and reality

The coverage on V4 says "everyone is switching to DeepSeek." The reality is more layered.

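V4-Pro at 1.6T parameters is too large for most enterprises to self-host. At one byte per parameter (FP8), the weights alone come to about 1.6 TB, and about 800 GB even at 4 bits, which is more than the 640 GB of HBM on an 8×H100 node. Serving it means a multi-node GPU cluster. Real deployment splits across three tiers, and the flagship is a smaller part of the picture than the coverage suggests: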

- Hyperscaler-managed. AWS Bedrock, Azure AI Foundry, Google Vertex AI all host DeepSeek's earlier models. None have announced V4-Pro hosting at the time of writing (it's been live for five days). Real adoption numbers aren't disclosed.

- Specialized inference providers. Together.ai, Fireworks, OpenRouter, Replicate. This appears to be where most non-Chinese DeepSeek usage happens in practice, through OpenAI-compatible APIs.

- Distilled and self-hosted variants. DeepSeek-R1-Distill-Llama-70B has likely become the practical default for local reasoning workloads, with the Qwen-32B distill close behind for smaller hardware footprints. This is what US enterprises typically deploy when they want the capability but don't want to send data to DeepSeek's API directly.

The most concrete V4 enterprise integration so far is Aurora Mobile (NASDAQ: JG), which integrated V4 Preview into its GPTBots.ai platform on launch day for enterprise RAG over CRM/ERP data. US-listed, day-one. That's proof it can be done, not proof of widespread adoption.

The honest summary: V4-Pro is more of a capability demonstration than a production model. The real adoption story is V4-Flash (284B / 13B active), which is self-hostable on a multi-GPU setup most mid-size teams can afford, and the distilled variants that fit on a single high-end server. The flagship gets the coverage; the second-tier model gets deployed.

Six lessons worth carrying forward

The arc from V1 to V4 contains specific lessons that I think generalize beyond DeepSeek, and that I find myself coming back to whenever I talk to my Columbia students or when I think about what the Spyre accelerator stack at IBM should optimize for next.

1. MoE made parameters cheap. Sparse attention is making context cheap.

These are the same trick at different layers. MoE says: scale the parameter count, activate a small fraction per token. DSA says: scale the context length, attend to a small fraction per query. Both decouple cost from the quantity being scaled: per-token compute stops tracking total parameter count, and the expensive attention work per query stops tracking context length. Both require the hardware to actually skip the inactive part; sparsity that the silicon can't exploit is just a math claim, not a speedup.

The next opportunity after these two will be the same idea applied to a different dimension. Activation sparsity within feed-forward layers (Deja Vu, contextual sparsity) is one candidate. KV cache eviction along the sequence dimension (H2O, SnapKV) is another. The pattern is clear: find a dimension that's dense in the model and sparse in reality, then design a kernel that takes advantage of the gap.

2. Hardware co-design beats pure algorithmic cleverness, every time.

DSA could have been "we ran a top-k selection in PyTorch and it works in principle." That version probably gets accepted at a conference. It does not get shipped as the production default at frontier scale.

What DeepSeek did instead: FP8 indexer because the tensor cores have an FP8 path; custom CUDA kernels for variable per-query sparsity because the existing kernels can't handle it; MLA in multi-query mode so KV cache compression compounds with attention sparsity; dense fallback for short contexts because the indexer overhead doesn't pay below a threshold. Every choice is hardware-aware.

For those of us working on accelerator stacks (on the IBM side, that's what I do day to day with torch-spyre and the Spyre AIU), this is the right reference architecture. Pure-software optimization on top of a generic accelerator doesn't get you there; the hardware path has to be designed around the same sparsity pattern the algorithm relies on.

3. Open weights with permissive licensing won the commoditization race.

It already happened. Every time DeepSeek (or Qwen, or Mistral, or now Zhipu's GLM-5) ships a competitive open model, closed-API prices drop within weeks. Stanford HAI has been documenting this in the AI Index for three consecutive years [6].

The "can open labs survive against closed frontier labs" question is the wrong one to be asking now. Open labs have already won on commoditization. Closed labs can no longer charge premium prices on the basic API tier.

4. Vertical specialization is what comes next.

We're past the era where every open lab tries to train a general-purpose frontier model. Each one specializes:

- DeepSeek leads on the sparse-attention architecture story and frontier-scale efficiency.

- Alibaba Qwen 3.6 Plus is marketed primarily for agentic coding and reasoning, with the strong multilingual support the Qwen line has always had as a secondary axis.

- Mistral Small 4 focuses on European, GDPR-friendly, edge-deployable models.

- Zhipu's GLM-5 is reportedly trained at large scale on Huawei Ascend infrastructure, demonstrating the growing feasibility of non-NVIDIA training stacks for frontier models.

- Google's Gemma 4 targets mobile and edge devices — a tighter hardware envelope than laptops.

- IBM's Granite 4.1 focuses on enterprise governance, a modular family, and calibrated uncertainty.

Open-weight AI isn't dying. It's segmenting into a set of labs each owning one or two capability axes, which is healthier than the all-or-nothing race that dominated 2024.

5. The hype gap is also the deployment gap.

V4-Pro is more of a capability demonstration than a production model. V4-Flash is what gets deployed. Distilled variants are what US enterprises run. This pattern repeats across labs and releases.

The implication for anyone doing enterprise architecture: plan for the second-tier model, not the flagship. The flagship sets the capability ceiling and the marketing tone. The second-tier model, typically the 200–400B MoE with 13–25B active, is what ends up in production. The same pattern appears to hold for closed-API stacks, where the cheaper variant likely handles the large majority of traffic.

6. Hardware decoupling from NVIDIA is real.

Reporting around V4 suggests DeepSeek had to re-adapt the model to Chinese-made chips, which delayed the release by months. Reports around Zhipu's GLM-5 and other Chinese frontier models suggest that large-scale training on non-NVIDIA accelerator stacks is becoming increasingly viable — particularly Huawei's Ascend line, which SemiAnalysis has covered in depth [7]. Export restrictions are accelerating investment in alternative hardware ecosystems, and the trend is unlikely to reverse.

The work involved is not just kernel re-adaptation. The real friction sits at the interconnect layer. NVIDIA's stack assumes NVLink and NVSwitch between accelerators on the same node and within a rack, giving you fat, low-latency all-reduce and all-to-all primitives that MoE routing and KV-cache sharding take for granted. Huawei's Ascend uses its own HCCS interconnect with the HCCL collective library; PCIe-based alternatives have lower per-link bandwidth and different topology. Porting a DeepSeek-class model to a non-NVIDIA fabric means rewriting the expert-routing path, the sharding strategy for the KV cache, and often the pipeline-parallel schedule, in addition to the kernels. The interconnect is where most of the hardest engineering ends up.

That's still good news for everyone who isn't NVIDIA. A monoculture where every stack optimizes for one vendor's specific design choices produces fragile software. With DeepSeek's architecture now running on multiple hardware substrates, and the broader push toward non-NVIDIA training accelerators gaining traction, the design space opens up for every accelerator group working on frontier-scale workloads. Including ours at IBM Research on Spyre.

What changes because of 1M context as the default

One specific implication deserves attention because it changes a design decision that's been settled in enterprise architecture for three years.

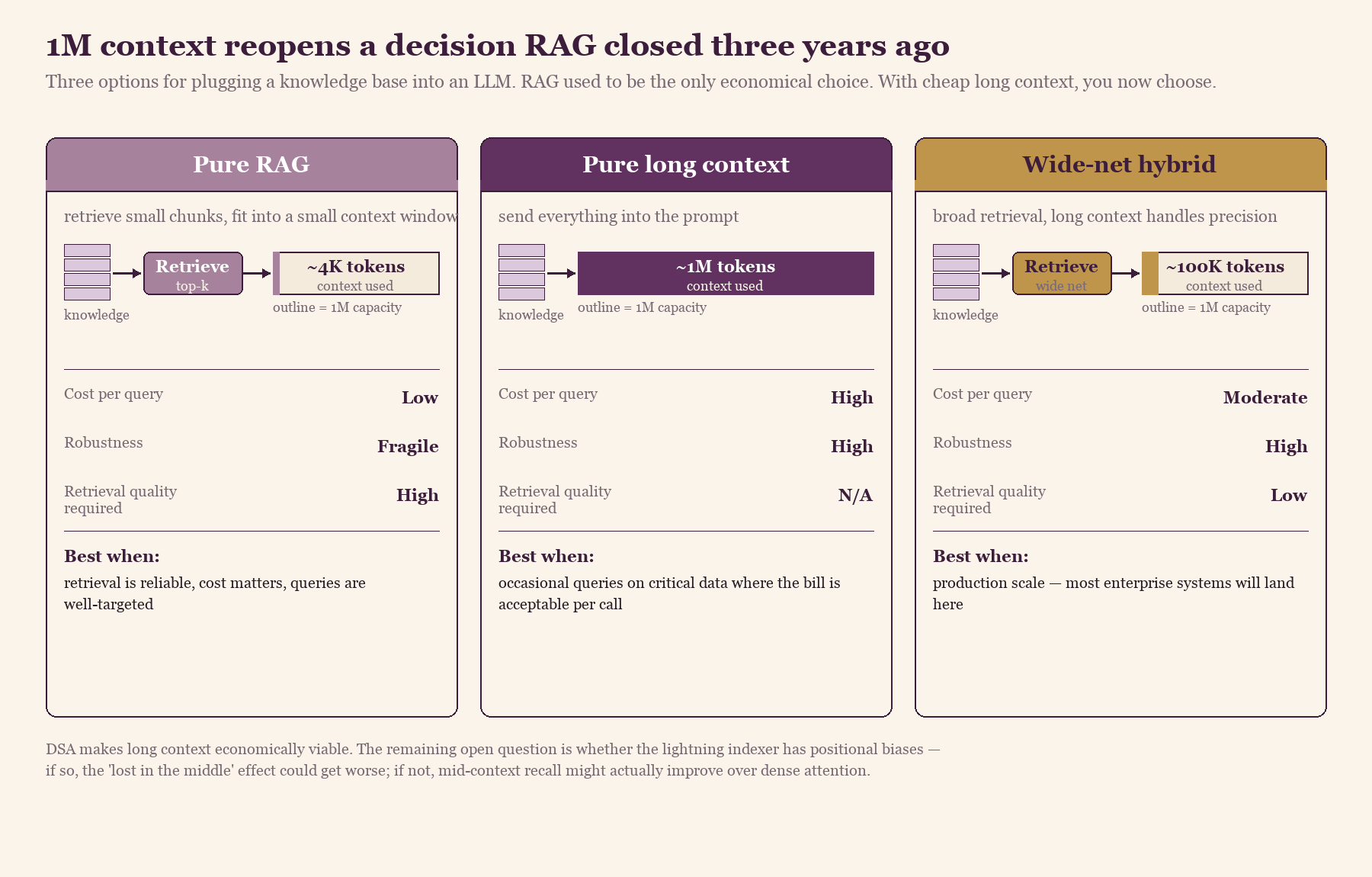

When DeepSeek makes 1M context the default tier, every enterprise that built a RAG pipeline in the last two years has to revisit a question they thought was answered. RAG won three years ago because context was expensive. Quadratic attention meant doubling context quadrupled cost; putting 500K tokens into a prompt was financially impractical. So everyone built retrieval pipelines: chunk the knowledge base, embed it, search for the relevant chunks, send only those into a small context window.

DSA changes those economics. The expensive softmax-and-matmul step runs over a fixed ~2,048 tokens regardless of context length. The lightning indexer still scans every prior token, but it runs in low precision at a tiny per-token cost. Per query, the work goes from O(L) at full precision to roughly O(L + k), where the O(L) part is now the cheap indexer scan and the softmax is no longer the dominant term. There are now three options where there used to be one:

- Pure RAG. Keep retrieving small chunks. Cheap and fast, but fragile. If retrieval misses the relevant chunk, the model never sees the answer and confidently makes one up.

- Pure long context. Send everything into the prompt. Simple and reliable. The cost is the catch: you pay for ~1M tokens per query, which adds up at scale.

- Wide-net hybrid. Use retrieval to fetch a much larger window of plausibly relevant material (say, 100K tokens instead of 4K), then let long context handle precision. Most production systems will end up here.
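To compare the three options on input cost alone, multiply prompt size by a per-token price. The 4K, 100K, and 1M token counts come from the list above; `PRICE` is a hypothetical placeholder of one currency unit per million input tokens, not a real quote from any provider.

```python
def cost_per_query(prompt_tokens, price_per_million_tokens):
    """Input-token cost of a single query at a flat per-token price."""
    return prompt_tokens / 1_000_000 * price_per_million_tokens


PRICE = 1.0  # hypothetical placeholder price per 1M input tokens

strategies = {
    "pure RAG": 4_000,
    "wide-net hybrid": 100_000,
    "pure long context": 1_000_000,
}
for name, tokens in strategies.items():
    print(f"{name:>18}: {cost_per_query(tokens, PRICE):.3f} per query")
```

Whatever the real price is, the ratios hold: the hybrid costs 25 times what pure RAG does per query and a tenth of what pure long context does. This ignores output tokens, prompt caching discounts, and the cost of running retrieval itself.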

Each card uses the same context-capacity outline (representing 1M tokens). The colored fill shows how much of that capacity each strategy actually consumes per query. Pure RAG pays for almost nothing per query but depends on retrieval precision; pure long context is the inverse; the wide-net hybrid is where most production systems will settle.

The architectural shift isn't huge, but it's real. You stop optimizing for retrieval precision and start optimizing for what to include. Different problem, different tools.

One open question worth flagging. Dense long-context models exhibit the "lost in the middle" effect (Liu et al., 2023): recall is strong for tokens near the beginning and end of the prompt and weaker for tokens buried in the middle. With DSA, the recall behavior in the middle of a 1M-token prompt depends on whether the lightning indexer reliably picks the right ~2,048 tokens. If the indexer is well-calibrated across positions, DSA might actually improve mid-context recall over dense attention by focusing the expensive computation where it matters. If the indexer has positional biases, the lost-in-the-middle effect could get worse instead of better. We don't yet have public evals at 1M context that clearly answer this, and it's the question I'd most want a DeepSeek engineer to publish a needle-in-a-haystack-style report on.

From the hardware side, which is where my attention sits, the main bottleneck moves. With DSA, the main cost is no longer attention compute. It's the lightning indexer running in low precision (FP8 in V3.2, FP4 in V4), top-k selection at scale, and KV cache management for million-token sequences. Memory bandwidth, KV cache eviction policies, and how the indexer's outputs are pipelined become the new bottlenecks. Anyone optimizing serving infrastructure for the old "short context, expensive attention" model is solving the wrong problem.

What this means for accelerator design

If you're designing or evaluating an AI accelerator in 2026, the V4 architecture is a useful stress test. A chip that's optimized for the previous generation's workload — dense GEMMs over big tensors, long contiguous reads from HBM, regular access patterns — will struggle with the V4 hot paths. The performance-critical kernels for a DSA-style stack live somewhere else.

A few of the new pressure points worth designing for:

- KV cache hierarchy. With MLA, the cache is small enough that more of it can live in higher levels of the memory hierarchy (HBM today, on-package SRAM tomorrow). The accelerators that win on long-context inference will be the ones that can keep more of the working KV state closer to compute.

- Sparse gather/scatter. Once top-k picks 2,048 tokens out of a million, the actual attention step needs to pull K and V vectors from non-contiguous positions in the cache. Standard contiguous-read DMA engines don't handle this well; you either copy the selected entries to a contiguous staging area first, or you need a gather-capable load unit.

- Top-k as a first-class operation. A fast top-k over a long sequence dimension is non-trivial. Accelerators with hardware sort or median-selection primitives have a real advantage here; everyone else implements it as a stack of bitonic sorts or approximate top-k.

- FP8 accumulation. Hopper's design choice — FP8 inputs, FP32 accumulation in the matmul — is now a baseline expectation. Accelerators that can only accumulate in BF16 either have to restructure the math or accept measurable precision loss in long training runs.

- Compiler and runtime work. The decompress-then-project step in MLA, the fused indexer+top-k in DSA, the variable per-query sparsity — these don't slot into the abstractions PyTorch's compiler stack was built around. Backend compiler and runtime teams end up writing new primitives and arguing with their schedulers about them.

The systems thesis underneath all of this: skipping work is now a first-class architectural goal, not a clever optimization. The accelerator stacks that internalize that will keep up. The ones that don't will spend the next two years catching up.

Where the cost actually goes

Parameter count gets the coverage. Activation pattern is what determines what the model actually costs to run, and it's what decides whether a frontier model is economically viable at all. V4 is the clearest current example: a 1.6 trillion-parameter model that serves at roughly the cost of a well-engineered 49B dense model, with attention costs that scale far more gently than dense attention at long context. Every layer of the stack is engineered around skipping what doesn't matter.

The labs that absorb this lesson keep shipping. The ones that don't keep training bigger dense models and wondering why their inference margins disappear.

References

[1] DeepSeek-AI, "DeepSeek V4 Preview Release," DeepSeek API news, April 24, 2026.

[2] DeepSeek-AI, "DeepSeek-V2: A Strong, Economical, and Efficient Mixture-of-Experts Language Model," arXiv:2405.04434, May 2024.

[3] DeepSeek-AI, "DeepSeek-V3 Technical Report," arXiv:2412.19437, December 2024.

[4] DeepSeek-AI, "DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning," arXiv:2501.12948, January 2025.

[5] DeepSeek-AI, "DeepSeek-V3.2: Pushing the Frontier of Open Large Language Models," arXiv:2512.02556, December 2025 (introduces DeepSeek Sparse Attention).

[6] Stanford HAI, "The 2026 AI Index Report," Stanford Institute for Human-Centered AI, 2026.

[7] SemiAnalysis, "Huawei AI CloudMatrix 384 — China's Answer to NVIDIA GB200 NVL72," 2025. (Industry analysis of Huawei's Ascend training cluster strategy and its position relative to NVIDIA rack-scale systems.)
