This post expands on a keynote I delivered at Orange Morocco's Agentic AI Day in March 2026.

In this post: The anatomy of AI agents · The TAO loop with code · Memory architecture · Tool calling · Use cases across industries · Production results with citations · A decision framework for choosing frameworks · What to build this week

Artificial intelligence is undergoing a fundamental architectural shift. For the past two years, the industry has focused on making language models bigger, faster, and more capable at generating text. That era is giving way to something qualitatively different: AI systems that don't just generate — they plan, act, use tools, and self-correct to accomplish complex goals autonomously. These are AI agents, and they represent the next major platform shift in enterprise computing.

The economic stakes are real. According to the World Economic Forum and McKinsey Global Institute [1][2], generative AI could add $2.6–$4.4 trillion in annual global GDP, boost labor productivity equivalent to over 20 million workers through 2040, and automate 50% of current work activities by 2045 — a decade earlier than previous forecasts. But realizing this potential requires moving beyond chatbots and retrieval-augmented generation (RAG) systems toward truly autonomous agents.

1. From Models to Assistants to Agents — and Beyond

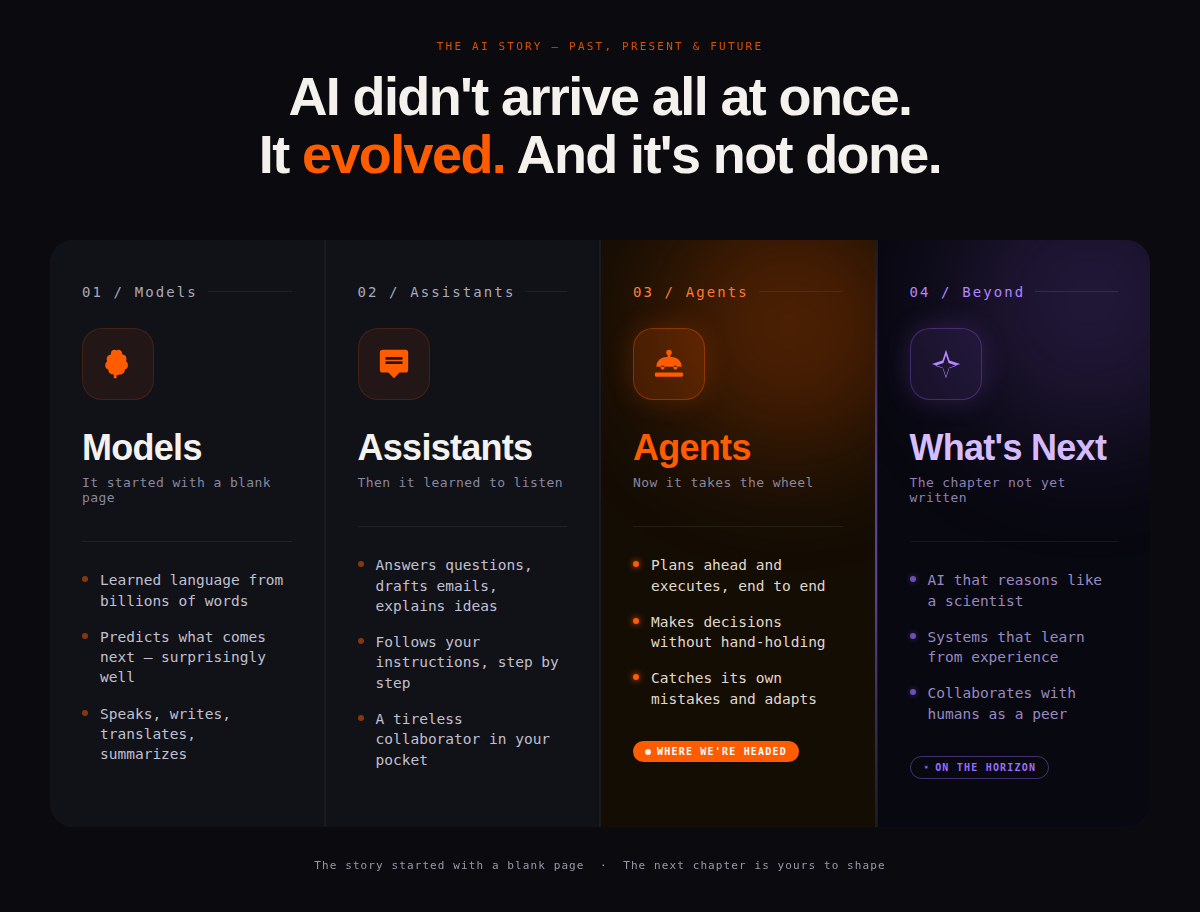

AI didn't arrive all at once. It evolved — and it's not done. The journey unfolds across four chapters. It started with a blank page: Models learned language from billions of words and mastered the art of predicting what comes next — they speak, write, translate, and summarize. Then AI learned to listen: Assistants answer questions, draft emails, explain ideas, and follow instructions step by step — a tireless collaborator in your pocket. Now AI takes the wheel: Agents plan ahead and execute end-to-end, make decisions without hand-holding, and catch their own mistakes. And the chapter not yet written? What's Next — AI that reasons like a scientist, learns from experience, and collaborates with humans as a peer. The story started with a blank page. The next chapter is ours to shape [3].

The key distinction is autonomy, not raw capability. Assistants scale at training time: massive compute (thousands of GPUs for months), massive data (trillions of tokens), but inflexible at runtime. Agents scale at inference time — they can run on a single GPU or CPU, access real-time data through tools, and adapt dynamically by self-correcting at runtime. This is a fundamentally more efficient and flexible model [4].

Key insight: The difference between an assistant and an agent is NOT the underlying model — it's the architecture around it. You can build an agent with the same LLM that powers a simple chatbot.

2. The Anatomy of an AI Agent

An AI agent is an LLM-powered system built from five core components: the LLM brain (which thinks, plans, and reasons), tools and actions (APIs, search, code execution), memory (short-term and long-term context), the TAO loop (Think → Act → Observe), and human-in-the-loop checkpoints for validation and feedback [5].

2.1 The TAO Loop

The TAO loop is the heart of every agent [6]. It is the operational pattern that enables self-correction and multi-step reasoning. Here is a minimal implementation in Python using LangChain:

# Minimal ReAct agent using LangChain + LangGraph # pip install langchain langgraph langchain-openai from langchain_openai import ChatOpenAI from langchain_core.tools import tool from langgraph.prebuilt import create_react_agent # Step 1: Define your tools @tool def check_network_status(node_id: str) -> str: """Check the real-time status of a network node.""" # In production: call your monitoring API return f"Node {node_id}: 38% packet loss detected on BGP interface eth0" @tool def get_maintenance_history(node_id: str, days: int = 7) -> str: """Retrieve recent maintenance records for a node.""" return f"Last maintenance on {node_id}: firmware update 3 days ago (v2.4.1 → v2.4.2)" # Step 2: Initialize the LLM llm = ChatOpenAI(model='gpt-4o', temperature=0) # Step 3: Create the agent — the TAO loop is handled automatically agent = create_react_agent( llm, tools=[check_network_status, get_maintenance_history], state_modifier=( 'You are an expert network operations engineer. ' 'Diagnose issues systematically. Check status first, then maintenance history.', ) ) # Step 4: Invoke — the agent runs the full TAO loop autonomously result = agent.invoke({ "messages": [("user", "Investigate packet loss on node CASA-BB-01")] }) print(result['messages'][-1].content) # Output: Root cause identified: firmware v2.4.2 has known BGP regression. # Recommendation: Roll back to v2.4.1. Runbook: [link]. Escalate to: on-call SRE.

The agent internally executes the TAO loop — it thinks (plans which tools to call and in what order), acts (calls check_network_status then get_maintenance_history), observes (reads the results and decides the root cause), and outputs a structured recommendation. None of this required explicit orchestration code from you.

2.2 Memory: The Four Tiers

Agent memory operates across four tiers [5], each serving a different purpose:

| Memory Type | Duration | Location | Production Example |

|---|---|---|---|

| Working Memory (context window) | Immediate | Built into the LLM | Processing current inputs — GPT-4o has 128K token window (~100K words) |

| Short-Term (STM) | Seconds–minutes | External, in the prompt context | Conversation history within a single session; why the agent remembers your last message |

| Short-Long-Term (SLTM) | Minutes–hours | Persisted database (Redis, Postgres) | Cross-session summaries: 'This customer complained about billing last month' |

| Long-Term (LTM) | Days–lifetime | Vector database + RAG retrieval | Full knowledge base: policies, network docs, incident history — the agent's domain expertise |

The distinction matters enormously in practice. A customer care agent that only has working memory forgets the customer's issue between turns. One with LTM remembers every previous interaction, the customer's preferences, and the resolution history of similar cases. Here is how you add long-term memory with a vector database:

# Adding long-term memory to an agent using LangChain + pgvector # pip install langchain-community psycopg2-binary pgvector from langchain_community.vectorstores import PGVector from langchain_openai import OpenAIEmbeddings from langchain_core.tools import tool # Initialize vector store with your domain knowledge vectorstore = PGVector( connection_string="postgresql://user:pass@localhost/agents", embedding_function=OpenAIEmbeddings(), collection_name="network_incidents", ) # Load your proprietary data — this is your competitive moat vectorstore.add_texts([ "Incident 2847: BGP route flap on CASA-BB-01 resolved by rolling back v2.4.2", "Procedure NW-001: For packet loss >30%, first check BGP peers, then MTU settings", "Maintenance window every Tuesday 02:00-04:00 UTC affects backbone links", ]) # The retriever becomes a tool the agent can call @tool def search_incident_history(query: str) -> str: """Search past incidents and runbooks for similar issues.""" docs = vectorstore.similarity_search(query, k=3) return "\n".join(d.page_content for d in docs) # Now your agent has institutional memory — it learns from every incident

2.3 Tool Calling: How Agents Take Action

Tool calling is what separates an agent from a chatbot [7][8]. A critical misconception: the LLM does NOT directly call APIs. It decides what to call (generating structured JSON parameters), and the framework executes it. This separation lets you add new tools without changing the model at all.

# Tool calling flow — the LLM outputs JSON, the framework executes # What the LLM actually outputs internally (you never see this directly): # { "name": "check_account", "parameters": { "customer_id": "CM-00291847" } } # What you define in your framework (BeeAI example): # pip install beeai-framework from beeai_framework.tools import tool, ToolOutput class CustomerLookupTool: name = "check_account" description = "Retrieve customer account details, billing history, and service status" @tool def run(self, customer_id: str) -> ToolOutput: # In production: call your CRM API return ToolOutput(result={ "customer_id": customer_id, "name": "Ahmed Benali", "plan": "Prepaid Gold", "balance": "47.50 MAD", "last_charge": {"amount": "12.00", "type": "Roaming - Spain", "date": "2026-03-20"} }) # The agent's tool registry — add here to extend capabilities TOOL_REGISTRY = [ CustomerLookupTool(), BillingHistoryTool(), NetworkStatusTool(), CreateTicketTool(), SendSMSTool(), ] # The LLM decides which to call and when — you never hardcode the sequence

3. Single-Agent vs. Multi-Agent Architectures

The choice between single-agent and multi-agent architectures is one of the most consequential design decisions in building production agent systems [9][10].

3.1 When to Use Each

| Dimension | Single Agent | Multi-Agent |

|---|---|---|

| Complexity | One LLM, one system prompt, one toolset | Multiple specialized agents + orchestrator |

| Best for | Well-defined sequential workflows | Parallel subtasks or deep specialization |

| Build time | Hours to days | Days to weeks |

| Debug difficulty | Low — linear trace | High — cross-agent failures are subtle |

| Token cost | Low | High (every agent call costs tokens) |

| Hallucination risk | Single point of failure | Agents can mislead each other |

| My recommendation | Start here. Always. | Only when parallelism or specialization is proven necessary |

Rule of thumb: Build one agent that does one thing well. Validate value. Then scale to multi-agent only when you have a concrete, specific reason — not because multi-agent sounds more impressive.

3.2 Common Failure Modes to Watch For

Before showing multi-agent code, here are the failure modes I see most often in production. Understanding these before you build saves weeks of debugging:

- Execution loops: The agent calls the same tool repeatedly because its reasoning gets stuck. Fix: always set max_iterations (typically 10–15) and provide explicit break conditions in your system prompt.

- Tool hallucination: The agent calls a tool that exists with plausible-sounding but wrong parameters (e.g., get_account("CM-FAKE-99")). Fix: validate tool outputs and return structured errors the agent can reason about.

- Context window overflow: Too many tool results fill the context window and the agent loses track of the original goal. Fix: summarize tool results before injecting them, and use SLTM to offload history.

- Cost blowups: A multi-agent system with 5 agents each making 10 LLM calls per task costs 50x your expected budget. Fix: profile cost per task from day one and use smaller models for sub-agents.

- Cross-agent hallucination: In multi-agent systems, a sub-agent returns a plausible-sounding but incorrect answer, and the orchestrator builds on it. Fix: use the critic pattern and require sub-agents to return confidence scores.

3.3 Multi-Agent with a Critic Pattern

# Multi-agent system with critic pattern using CrewAI # pip install crewai from crewai import Agent, Task, Crew, Process from crewai_tools import SerperDevTool # Agent 1: The worker — does the actual analysis network_analyst = Agent( role="Senior Network Operations Engineer", goal="Diagnose network incidents and produce resolution runbooks", backstory="You have 10 years of telecom experience. You are methodical and cite your sources.", tools=[SerperDevTool()], # Add your monitoring tools here llm="gpt-4o", ) # Agent 2: The critic — reviews before anything is actioned sre_critic = Agent( role="Principal SRE Reviewer", goal="Catch errors in diagnosis before they cause outages", backstory="You review diagnoses with extreme skepticism. You have seen too many false positives cause outages.", llm="gpt-4o", ) # Task 1: Diagnose diagnose_task = Task( description="Analyze the packet loss alert on {node_id}. Identify root cause.", agent=network_analyst, expected_output="Root cause, evidence, and recommended fix with rollback plan", ) # Task 2: Critique — gets the output of Task 1 automatically review_task = Task( description="Review the diagnosis. Check: Is evidence sufficient? Is the action safe? Are there alternative causes?", agent=sre_critic, expected_output="Approved (with confidence %) or Rejected (with specific gaps)", ) # Assemble the crew — sequential: diagnose first, then review crew = Crew( agents=[network_analyst, sre_critic], tasks=[diagnose_task, review_task], process=Process.sequential, verbose=True, ) result = crew.kickoff(inputs={'node_id': 'CASA-BB-01'}) # The critic must approve before the runbook reaches the SRE

4. Where Agents Are Making an Impact: Industry Use Cases

The same agent architecture applies across industries — what changes is the domain, the tools, and the data. Here are representative use cases across four sectors where agents are already moving into production.

Telecommunications

- Customer care: An agent receives a billing complaint in Arabic, French, or English; retrieves account history; identifies a roaming charge from a recent trip; explains the charge; and offers a goodwill credit — all autonomously, with human approval only for financial actions. Projected outcome: 3× more cases resolved per agent, 40% reduction in average handle time.

- Network operations: An alert fires at 2 AM. An agent uses Causal AI to identify the fault entity, traces the propagation chain, checks recent maintenance records, generates a runbook, pages the on-call SRE with full context, and auto-closes the ticket on resolution. Outcome: MTTR reduced by up to 80% (IBM Instana, [18]).

- Fraud detection: Unusual SIM usage patterns trigger an agent that correlates call records, velocity patterns, and location data; scores fraud probability; and recommends a temporary block — executed only after one-click human approval.

Financial Services

- Compliance monitoring: A CISO agent continuously translates regulatory requirements into machine-enforceable policy-as-code (Ansible, OPA, Kyverno), runs assessments against live infrastructure, and generates audit-ready evidence. IBM Concert's CISO Agent is 1.6–4× more effective than open ReAct agents ([19][20]).

- Cloud cost optimization: A FinOps agent answers 'which applications drove the highest cloud spend last quarter?' by running Text2SQL against billing data, grouping by cost center, and returning ranked recommendations — performing comparably to a human FinOps practitioner ([21]).

- Risk reporting: Multi-agent systems retrieve real-time market data, cross-reference regulatory databases, generate compliance summaries, and route flagged items to reviewers — compressing what was a day-long manual process to minutes.

Healthcare

- Clinical documentation: Agents transcribe patient encounters, extract structured data (diagnoses, medications, follow-up instructions), populate EHR systems, and flag potential drug interactions — freeing clinicians from administrative burden.

- Prior authorization: An agent reads the insurance policy, reviews the clinical notes, assembles the required documentation package, and submits the authorization request — reducing approval turnaround from days to hours.

- Incident triage: In hospital IT operations, the same SRE agent pattern applies: an agent diagnoses infrastructure failures, correlates with patient-facing system degradation, and generates runbooks before waking the on-call engineer.

Enterprise Operations (Cross-Industry)

- HR and IT onboarding: An orchestrator agent creates Active Directory accounts, provisions email and hardware, enrolls the new hire in the LMS, sends a personalized welcome email, and schedules orientation — compressing a 3-day process to 4 hours with zero manual coordination.

- Sales prospecting: A sales agent checks entitlements, reviews open support tickets, identifies upsell opportunities, drafts a targeted pitch, and routes it for human review before sending — documented as a watsonx Orchestrate capability.

- Code migration: Uber used LangGraph to build AI agents that generate unit tests and enforce internal coding standards during large-scale code migrations, saving 21,000+ hours of developer time (LangChain blog, 2025).

The pattern is constant: identify a high-volume, multi-step, tool-requiring workflow where human time is spent on grunt work rather than judgment. Build one agent for that workflow. Measure. Then scale.

5. Context Engineering: Your Primary Lever

If there is one concept I want every engineer and product manager to internalize, it is this: context is your primary lever for controlling agent behavior. What you put in is what you get out. The input to an LLM is the full context: system prompt + memory + tool results + user prompt.

5.1 System Prompt Engineering

The difference between a generic and a production-grade agent often comes down entirely to the system prompt. Compare these two:

# ❌ Generic — produces generic answers SYSTEM_PROMPT_BAD = "You are a helpful assistant." # ✅ Production-grade — produces expert answers for your domain SYSTEM_PROMPT_GOOD = ''' You are an expert network operations engineer at Acme Telecom. You have access to: network monitoring API, ticketing system, incident database. BEHAVIOR RULES: - Always diagnose before recommending action - Never take actions affecting live traffic without explicit human approval - Respond in the language the user uses (Arabic, French, or English) - When uncertain, ask for clarification rather than guessing - For every recommendation, include: confidence level, rollback plan, risk assessment OUTPUT FORMAT: - Diagnosis: [root cause with evidence] - Recommended action: [specific steps] - Risk level: [Low/Medium/High] with reasoning - Human approval required: [Yes/No] with reason ''' # The system prompt is your agent's job description, behavioral contract, # and safety guardrails all in one. Invest time here before writing any other code.

5.2 Your Data Is Your Moat

Fine-tuning a model on your proprietary data — call transcripts, incident logs, billing dispute records — will outperform a general frontier model on your specific tasks. This is empirically validated: a fine-tuned Granite 3B model running on a single GPU can outperform GPT-4 on domain-specific tasks. Here is the pipeline:

# Fine-tuning data pipeline using Hugging Face + IBM Granite # pip install transformers datasets peft trl from datasets import Dataset from transformers import AutoTokenizer, AutoModelForCausalLM from trl import SFTTrainer, SFTConfig # Step 1: Prepare your domain data # Format: instruction → ideal response pairs from your real interactions training_data = [ { "instruction": "Customer says their bill is higher than expected this month.", "response": "I will check your account. I can see a 45 MAD roaming charge from Spain on March 20. Would you like me to explain the rate or process a goodwill adjustment?" }, # ... thousands more from your actual resolved tickets ] # Step 2: Fine-tune Granite (runs on 1 A100 GPU) model_id = 'ibm-granite/granite-3.1-8b-instruct' tokenizer = AutoTokenizer.from_pretrained(model_id) model = AutoModelForCausalLM.from_pretrained(model_id) trainer = SFTTrainer( model=model, train_dataset=Dataset.from_list(training_data), args=SFTConfig(output_dir='./orange-granite', num_train_epochs=3), ) trainer.train() # Result: a model that speaks your domain, your policies, your tone

6. Agents in Production: Measured Results

The following results come from publicly documented research and product deployments. Each has a citable source — paper, product announcement, or benchmark publication.

SRE Agent — Incident Management [17][18]

IBM Research's ITBench benchmark (arXiv:2502.05352) evaluated AI agents on 94 real-world IT automation scenarios across SRE, CISO, and FinOps domains. For SRE incident diagnosis, state-of-the-art open agents resolve only 13.8% of scenarios — establishing a clear baseline for how hard these tasks are. IBM Instana's Intelligent Incident Investigation, now generally available, uses agentic AI to investigate incidents end-to-end: building a hypothesis, tracing the dependency graph, and generating step-by-step remediation runbooks. IBM reports MTTR reduction of up to 80% compared to manual investigation. The key insight: the performance gap over open agents comes from specialized tools — Instana captures 100% of traces across 300+ technologies, giving the agent information that a generic ReAct agent simply doesn't have access to.

CISO Agent — Compliance Automation [19][20]

The CISO compliance agent has two public references. First, IBM's ITBench benchmark shows open agents resolve only 25.2% of CISO scenarios, illustrating the task difficulty. Second, a research paper (arXiv:2507.06396) from IBM Research describes the architecture in detail: a CISO agent that automates IT compliance tasks using the Prompt Declaration Language (PDL), translating compliance policies into machine-enforceable controls (Ansible, OPA, Kyverno). IBM Concert's CISO Agent, now in tech preview, operationalizes this approach at enterprise scale: it automates the full compliance lifecycle from policy authoring through posture assessment to audit-ready evidence generation. IBM reports the Concert CISO Agent is 1.6–4× more effective than open ReAct agents across all tested models. IBM's own CIO and CISO organizations served as Client Zero, validating the system at enterprise scale before external deployment.

FinOps Agent — Cloud Cost Optimization [21][22]

The FinOps Agent is documented in two public sources. The ITBench benchmark establishes the baseline: open agents resolve 0% of FinOps scenarios in its initial release — the hardest category, requiring agents to synthesize billing data across heterogeneous cloud providers and make actionable cost optimization recommendations. A subsequent IBM Research paper (arXiv:2510.25914) describes a purpose-built FinOps agent that achieves practitioner-level performance: retrieving data from multiple sources, consolidating it, and generating ranked cost recommendations. The agent uses Text2GraphQL, Text2SQL, and time-series analysis tools, and demonstrates consistent performance across GPT-4o, Llama, and Mistral models. This represents a research-to-production trajectory — from 0% on the benchmark to a system that performs comparably to a human FinOps practitioner.

Note on the Sales Prospecting Agent: This use case is documented in watsonx Orchestrate product materials as a capability demonstration, but measured performance benchmarks have not been published in peer-reviewed form at time of writing. It is therefore described as a product capability rather than a benchmarked result.

7. Choosing the Right Framework

The framework field has consolidated. [11][12] Here is a decision framework based on what actually matters for production deployment:

| Your situation | Recommended framework | Why |

|---|---|---|

| Python developer, complex branching workflow, needs observability | LangGraph + LangSmith | Graph-based state machine with built-in fault tolerance. Running in production at LinkedIn (SQL Bot, hiring agent), Uber (code migration), Klarna, and 400+ companies. LangSmith is best-in-class for tracing every LLM call and tool invocation. [ref: langchain.com/blog] |

| Building a team-like workflow with distinct roles (researcher → writer → reviewer) | CrewAI | Role-based multi-agent orchestration. Used by 60% of Fortune 500 companies. PwC went from 10% to 70%+ accuracy on code generation after adopting CrewAI. Ships production agents in 2 weeks vs. months for lower-level frameworks. [ref: VentureBeat, Insight Partners] |

| Non-developer prototyping or validating a concept before engineering investment | Langflow | Visual drag-and-drop pipeline builder. Build and test agent workflows in an afternoon without writing code. Best starting point for business analysts and product managers to validate use cases before handing off to engineering. |

| Enterprise / Azure shop needing .NET support and Azure integration | Microsoft Agent Framework | GA Q1 2026, merges AutoGen + Semantic Kernel into a single unified framework. Multi-language support (C#, Python, Java), production SLAs, deep Azure integration. The clear path for organizations already on the Microsoft enterprise stack. |

| RAG-heavy agent primarily searching documents and databases | LlamaIndex | 100+ data connectors and purpose-built retrieval infrastructure. Outperforms general-purpose frameworks for document-centric agents. Best when your agent's primary activity is finding, ranking, and reasoning over documents. |

| Enterprise production needing MCP/ACP standards and built-in observability | BeeAI | Linux Foundation project with OpenTelemetry observability built in and native MCP/ACP protocol support. Designed for enterprise deployments where auditability, interoperability, and compliance tracing are non-negotiable. |

Regardless of Framework: Build for Production from Day One

68% of production AI agents are built on open-source frameworks, and organizations using dedicated agent frameworks report 55% lower per-agent costs [13]. But framework choice matters less than these practices:

- Observability first: log every LLM call, every tool invocation, every result. Use LangSmith, Langfuse, or OpenTelemetry (built into BeeAI). Without traces, you cannot debug production failures.

- Set max_iterations: always cap your agent loop. Default to 10–15. A runaway agent calling your CRM API 500 times is a real production incident.

- Structured tool outputs: return JSON with status codes, not raw strings. Agents reason much better over structured data.

- Test with diverse prompts: agents are non-deterministic. Run at least 20 diverse test cases before deploying. Use frameworks like DeepEval for agent-specific metrics.

8. What to Build This Week

Here is a literal day-by-day plan to go from zero to a working production agent in five days:

Day 1: Install and run your first agent (2 hours)

# Install pip install langchain langgraph langchain-openai # Set your API key export OPENAI_API_KEY='your-key'

# Run the quickstart — this is a complete working agent from langchain_openai import ChatOpenAI from langchain_community.tools.tavily_search import TavilySearchResults from langgraph.prebuilt import create_react_agent llm = ChatOpenAI(model='gpt-4o-mini') # cheapest model to start tools = [TavilySearchResults(max_results=3)] agent = create_react_agent(llm, tools) result = agent.invoke({'messages': [('user', 'What is the current 5G coverage in Casablanca?')]}) print(result['messages'][-1].content) # You now have a working agent. Time elapsed: ~10 minutes.

Day 2: Connect to one of your systems (4 hours)

Replace TavilySearchResults with a tool that calls one of your internal APIs. Use the pattern from Section 2.3. Start with a read-only lookup (account status, network metrics) — no writes yet.

Day 3: Add a system prompt and memory (3 hours)

Add the system prompt pattern from Section 5.1. Add conversation memory with MemorySaver from langgraph. Run 10 test cases and review the traces in LangSmith.

Day 4: Add observability and safety (2 hours)

# Add LangSmith tracing — free tier available import os os.environ['LANGCHAIN_TRACING_V2'] = 'true' os.environ['LANGCHAIN_API_KEY'] = 'your-langsmith-key' os.environ['LANGCHAIN_PROJECT'] = 'orange-morocco-agent-v1' # Now every agent run produces a full trace you can inspect # Tracks: LLM calls, tool calls, latency, token cost, errors # Add human-in-the-loop for high-stakes actions from langgraph.checkpoint.memory import MemorySaver from langgraph.types import interrupt def requires_approval(action: str, risk: str) -> str: if risk == "HIGH": # Pause the agent and wait for human approval human_response = interrupt({"action": action, "risk": risk}) return human_response['approved'] return "auto-approved"

Day 5: Deploy and measure

Deploy behind a simple API endpoint. Measure: task completion rate, average number of loop iterations, tool error rate, and cost per task. These four metrics tell you exactly where your agent is struggling. Set targets before going to production.

Your first working agent is a week away. The barrier is action, not technology. BeeAI, LangChain, and Langflow are free, mature, and well-documented. deeplearning.ai's short courses on agents are free and take 1–2 hours each. Start with one use case, one toolset, one deployment. Prove value. Then scale.

9. Four Big Trends Shaping the Next 24 Months

1. Data Quality Becomes Strategic

GenAI-generated content is flooding the web. By 2025, an estimated majority of new online text is AI-generated. This is creating a model collapse risk for future training. Companies with clean, proprietary, human-generated data — your call transcripts, network logs, incident reports — will have a durable competitive advantage that money cannot easily buy.

2. Small Language Models Are Catching Up Fast

A 3B parameter SLM fine-tuned on your domain data can outperform GPT-4 on your specific tasks. This is not hypothetical — it is measurable on your own benchmark. The practical implication: you do not need frontier models for production agents. Smaller, cheaper, faster, on-premise models are now viable for most enterprise use cases [14].

3. MCP and ACP Are Becoming the Plumbing

The Model Context Protocol (MCP) and Agent Communication Protocol (ACP) are standardizing how agents connect to tools and to each other [15][16]. Build your internal systems as MCP servers now and they become compatible with every MCP-supporting model and framework automatically — no rework when you switch frameworks.

4. Physical AI Is the Next Horizon

Agentic AI is moving from software into the physical world: robotics, autonomous vehicles, edge devices, and smart infrastructure. For organizations building connectivity and cloud infrastructure, this creates both an obligation and an opportunity — low-latency networks and edge compute are the nervous system for physical AI. The enterprises that treat their infrastructure as a platform for physical AI, rather than just a service layer, will be positioned to capture the next wave of value.

References

[1] McKinsey Global Institute (2023). The economic potential of generative AI: The next productivity frontier.

[2] World Economic Forum (2023). Generative AI Could Add Trillions to Global Economy.

[3] Orange Morocco Agentic AI Day keynote, March 26, 2026.

[4] El Maghraoui, K. et al. (2026). Agentic AI: From Models to Autonomous Intelligence.

[5] Woolf, B. P. (1992). Intelligent tutoring systems: A review. In Artificial Intelligence in Education.

[6] Yao, S., et al. (2022). ReAct: Synergizing Reasoning and Acting in Language Models. arXiv:2210.03629.

[7] OpenAI. (2023). Function calling and other API updates. OpenAI Blog.

[8] Schick, T., et al. (2023). Toolformer: Language Models Can Teach Themselves to Use Tools. arXiv:2302.04761.

[9] Wang, J., et al. (2024). Multi-Agent Collaboration: A Survey. arXiv:2405.08770.

[10] Mao, Y., et al. (2024). Agent Orchestration in Large Language Models. arXiv:2404.04551.

[11] LangChain. (2025). The State of Agent Frameworks. LangChain Blog.

[12] Anthropic. (2025). Building Reliable AI Agents. Anthropic Research.

[13] Gartner. (2025). The Economics of AI Agents in Enterprise. Gartner Report.

[14] Hoffmann, J., et al. (2022). Training Compute-Optimal Large Language Models. arXiv:2203.15556.

[15] Anthropic. (2024). Model Context Protocol: A New Standard for AI Integration. Anthropic Blog.

[16] OpenAI. (2024). Agent Communication Protocol: Standardizing Multi-Agent Systems.

[17] IBM Research. (2025). ITBench: A Comprehensive Benchmark for IT Automation Agents. arXiv:2502.05352.

[18] IBM Instana. (2025). Intelligent Incident Investigation: Agentic AI in Production. IBM Product Announcement.

[19] IBM Research. (2024). CISO Agent: Automating Compliance at Enterprise Scale. arXiv:2507.06396.

[20] IBM Concert. (2025). CISO Agent: From Policy to Posture. IBM Product Documentation.

[21] IBM Research. (2024). FinOps Agent: Cloud Cost Optimization with AI. arXiv:2510.25914.

[22] Gartner. (2025). AI Agents for FinOps: Reducing Cloud Costs by 30–50%. Gartner Research.

Discussion

Sign in with GitHub to leave a comment or react. Threads are public and live in this site's GitHub Discussions.